eBPF: From Kernel to Cloud, Episode 10

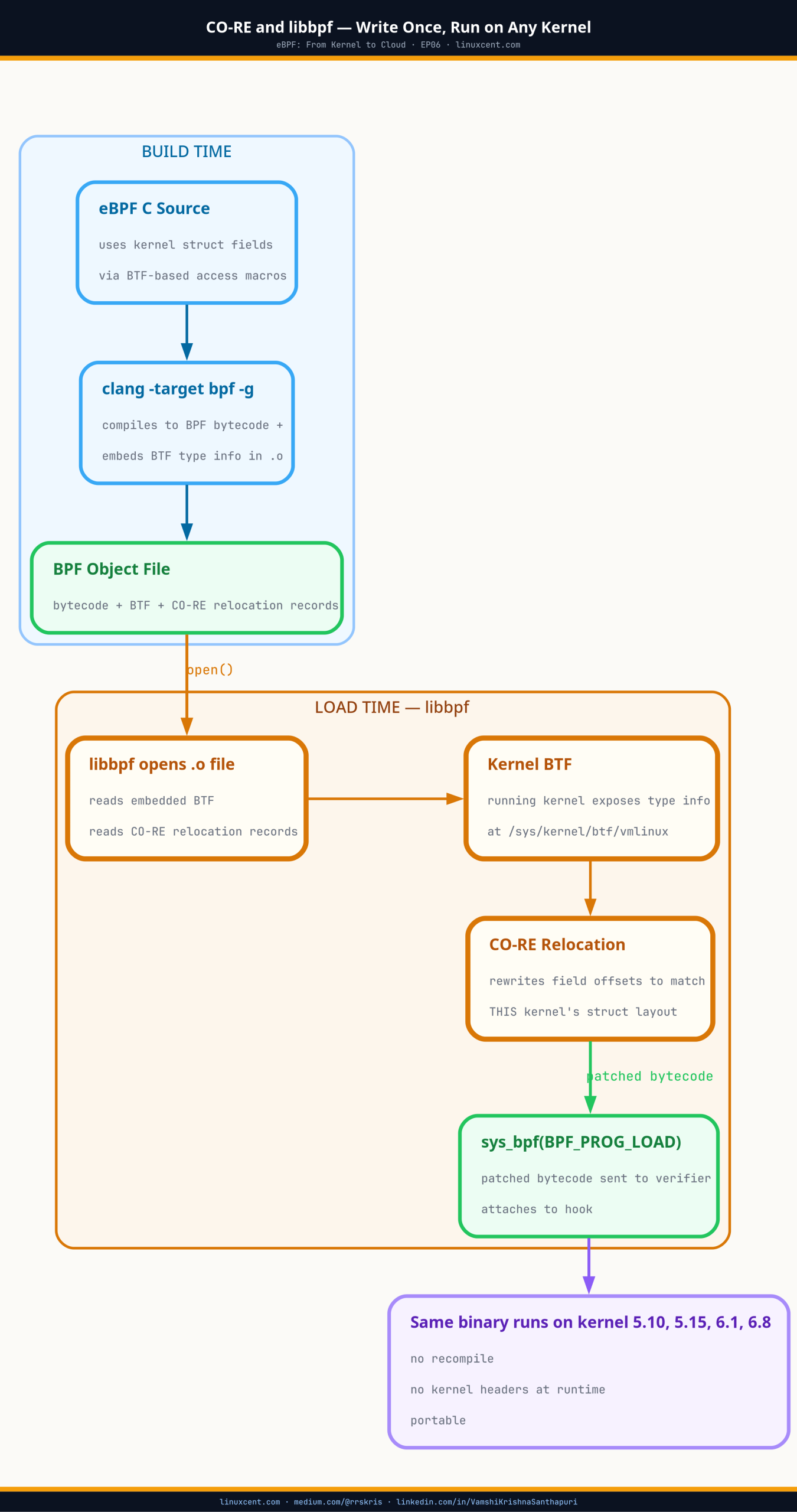


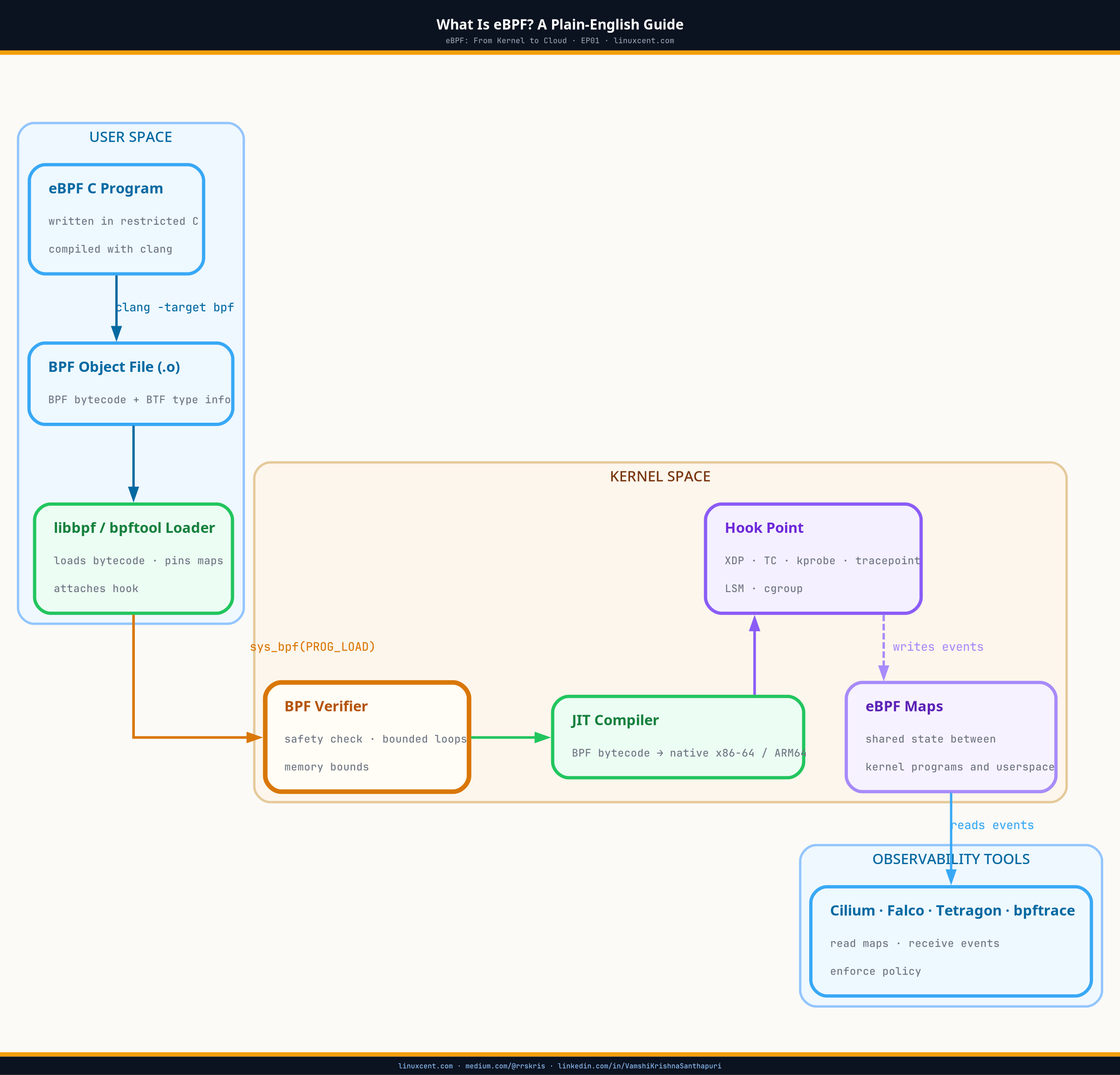

Architecture Overview

TL;DR

- Network flow observability with eBPF attaches persistent programs to TC hooks and records every connection attempt, retransmit, reset, and drop — continuously, with no sampling

(TC hook = Traffic Control hook: the point in the Linux network stack where eBPF programs intercept packets after ingress or before egress, tied to a specific network interface)

- APM tools and service mesh telemetry are interpretations of what happened; kernel-level flow data from TC hooks is the raw event stream they all derive from

- Retransmit counters at the kernel level reveal congestion, half-open connections, and remote endpoint failures that application logs never surface

- Cilium’s Hubble and similar tools (Pixie, Retina) are eBPF flow exporters: they run TC programs, collect perf_event or ringbuf events, and expose them over an API

- You can verify what flow data a tool is actually collecting with four bpftool commands, without reading documentation

- Production caution: flow maps grow with the number of active connections; pin and bound your maps, and account for the per-packet overhead on high-throughput interfaces

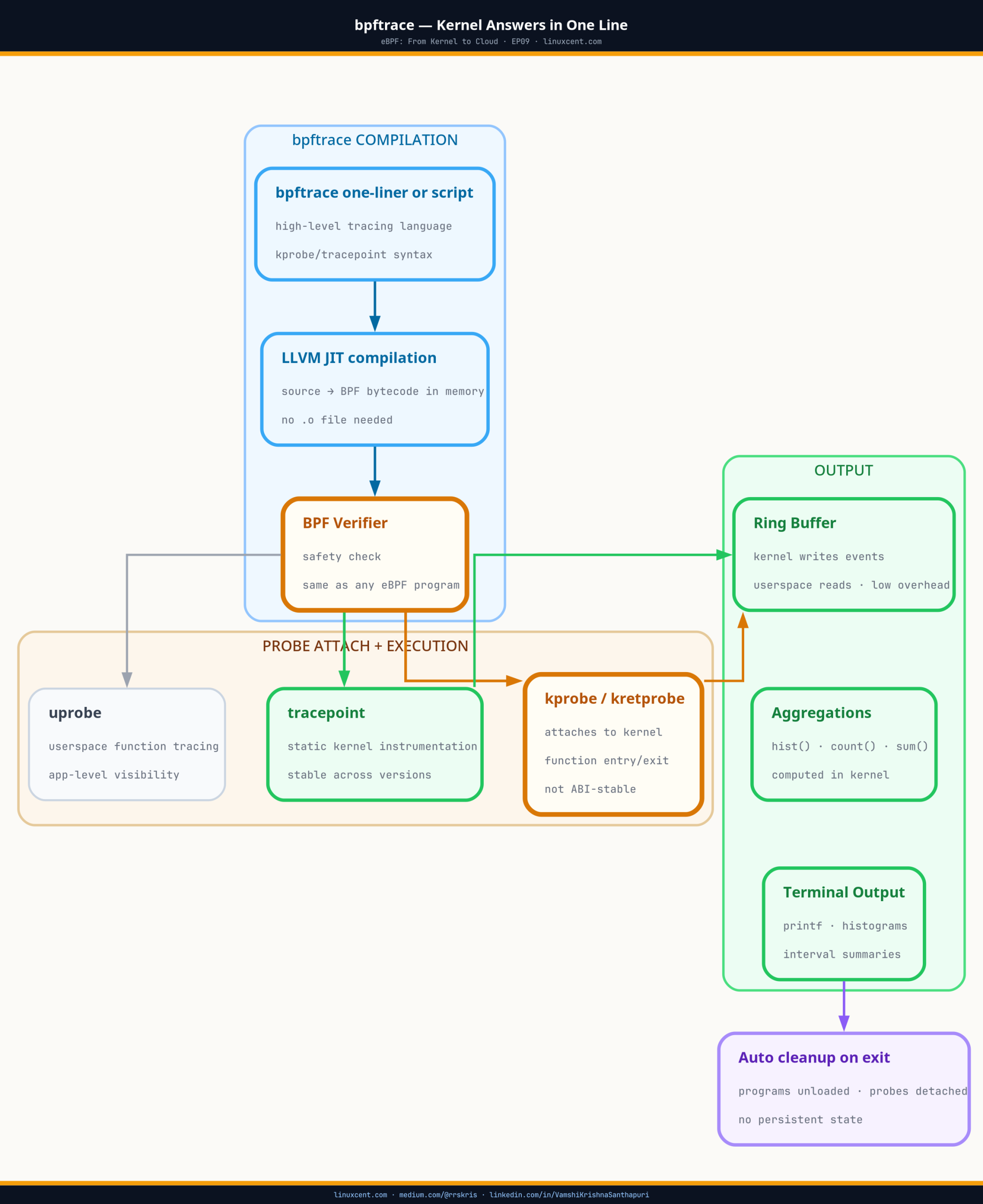

EP09 showed bpftrace as an on-demand kernel query tool — compile a question, get an answer, clean up. Network flow observability with eBPF is the persistent version: programs that stay attached to TC hooks across your entire fleet, recording every connection without waiting for you to ask. When a client reports intermittent failures that appear nowhere in application logs, that persistent record is what you query. This episode covers how that layer works and how to read it.

Quick Check: What Flow Data Is Your Cluster Already Collecting?

Before building anything new, check what’s already running. If you have Cilium, Pixie, or Retina on your cluster, eBPF flow programs are already attached:

# SSH into a worker node, then:

# What TC programs are attached to cluster interfaces?

bpftool net list

# Expected output on a Cilium node:

# xdp:

#

# tc:

# eth0(2) clsact/ingress prog_id 38 prio 1 handle 0x1 direct-action

# eth0(2) clsact/egress prog_id 39 prio 1 handle 0x1 direct-action

# lxc12a3(15) clsact/ingress prog_id 41 prio 1 handle 0x1 direct-action

# lxc12a3(15) clsact/egress prog_id 42 prio 1 handle 0x1 direct-action

# What maps are those programs holding state in?

bpftool map list | grep -E -A1 "ct[46]|flow|conn|sock|nat"

# Sample output:

# 24: hash name cilium_ct4_global flags 0x0

# key 24B value 56B max_entries 65536 memlock 4718592B

# 25: hash name cilium_ct4_local flags 0x0

# key 24B value 56B max_entries 8192 memlock 589824B

Each lxcXXXX interface is the host side of a pod’s veth pair. The TC programs on those interfaces are what Cilium uses to enforce NetworkPolicy and collect flow telemetry. If you see prog_id values on pod interfaces, your cluster is already doing kernel-level flow collection.

Not running Cilium? On a plain kubeadm or EKS node without a CNI that uses eBPF, bpftool net list will show no TC programs on pod interfaces, just whatever kube-proxy or the CNI plugin installed. You can still attach your own flow programs: tc qdisc add dev eth0 clsact creates the hook point, and tc filter add then attaches a program to it. That’s the starting point this episode covers.
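To turn that check into something scriptable, here is a minimal Python sketch that parses the text layout shown in the expected output above and keeps only the attachments on pod interfaces. The sample text, the regular expression, and the function names are illustrative assumptions; the output format of bpftool net list varies between versions, so treat the pattern as a starting point rather than a stable contract.

```python
import re

# Sample text copied from the expected output shown above; on a real node this
# would come from running `bpftool net list` (the layout can differ by version).
SAMPLE = """\
xdp:

tc:
eth0(2) clsact/ingress prog_id 38 prio 1 handle 0x1 direct-action
eth0(2) clsact/egress prog_id 39 prio 1 handle 0x1 direct-action
lxc12a3(15) clsact/ingress prog_id 41 prio 1 handle 0x1 direct-action
lxc12a3(15) clsact/egress prog_id 42 prio 1 handle 0x1 direct-action
"""

# Assumed line shape: interface(ifindex) clsact/<direction> prog_id <id> ...
TC_LINE = re.compile(
    r"^(?P<iface>\S+)\((?P<ifindex>\d+)\)\s+clsact/(?P<direction>ingress|egress)"
    r"\s+prog_id\s+(?P<prog_id>\d+)"
)


def parse_tc_attachments(text):
    """Return one dict per TC attachment line found in the text."""
    attachments = []
    for line in text.splitlines():
        match = TC_LINE.match(line.strip())
        if match:
            attachments.append({
                "iface": match["iface"],
                "ifindex": int(match["ifindex"]),
                "direction": match["direction"],
                "prog_id": int(match["prog_id"]),
            })
    return attachments


def pod_attachments(attachments, prefix="lxc"):
    """Keep only attachments on pod veth interfaces (named lxc* in the sample)."""
    return [a for a in attachments if a["iface"].startswith(prefix)]


if __name__ == "__main__":
    for a in pod_attachments(parse_tc_attachments(SAMPLE)):
        print(f'{a["iface"]} {a["direction"]} prog_id={a["prog_id"]}')
```

An empty result from pod_attachments on a node that should be running an eBPF CNI is itself a useful signal that flow collection is not attached where you expect.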

The client opened a ticket on a Tuesday afternoon. “Intermittent connection failures to the payment gateway. Started around 11 AM. Application logs say timeout. Retry logic is masking it for most users but the error rate is up 0.3%.”

I looked at the APM dashboard. The service showed elevated latency — p99 at 850ms versus a normal 120ms — but no hard errors at the application layer. The service mesh metrics showed the downstream call succeeding from the mesh’s perspective. The payment gateway team said their side looked clean.

Three tools. Three different answers. All of them interpreting the network. None of them were the network.

I ran:

bpftool map dump id 24 | grep -A5 "payment-gateway-ip"

The connection tracking map showed retransmit count 14 for a specific (src_ip, dst_ip, src_port, dst_port, protocol) tuple: the same 5-tuple, every 30 seconds, for 2 hours. The kernel was retransmitting. The TCP stack was compensating. The application was seeing sporadic success because retransmits eventually got through. The APM dashboard averaged that latency into a p99 and called it “elevated.”

The kernel had the truth. Everything above it was rounding.

Why Application-Level Metrics Miss What the Kernel Sees

Application metrics — APM spans, service mesh telemetry, load balancer health checks — operate at Layer 7. They measure round-trip time for complete requests, error codes returned, bytes transferred. They answer “did this request succeed?” not “what did the network do to make it succeed?”

The TCP stack underneath those requests handles retransmits, congestion window adjustments, RST packets, and half-open connections silently. From an application’s perspective, a request that required 3 retransmits before the ACK arrived looks identical to one that succeeded on the first attempt — slightly slower, but successful.

This is structural, not a tooling gap. Application-layer observability tools cannot see below their own protocol boundary. The kernel’s TCP implementation does not report upward when it retransmits. It just retransmits.

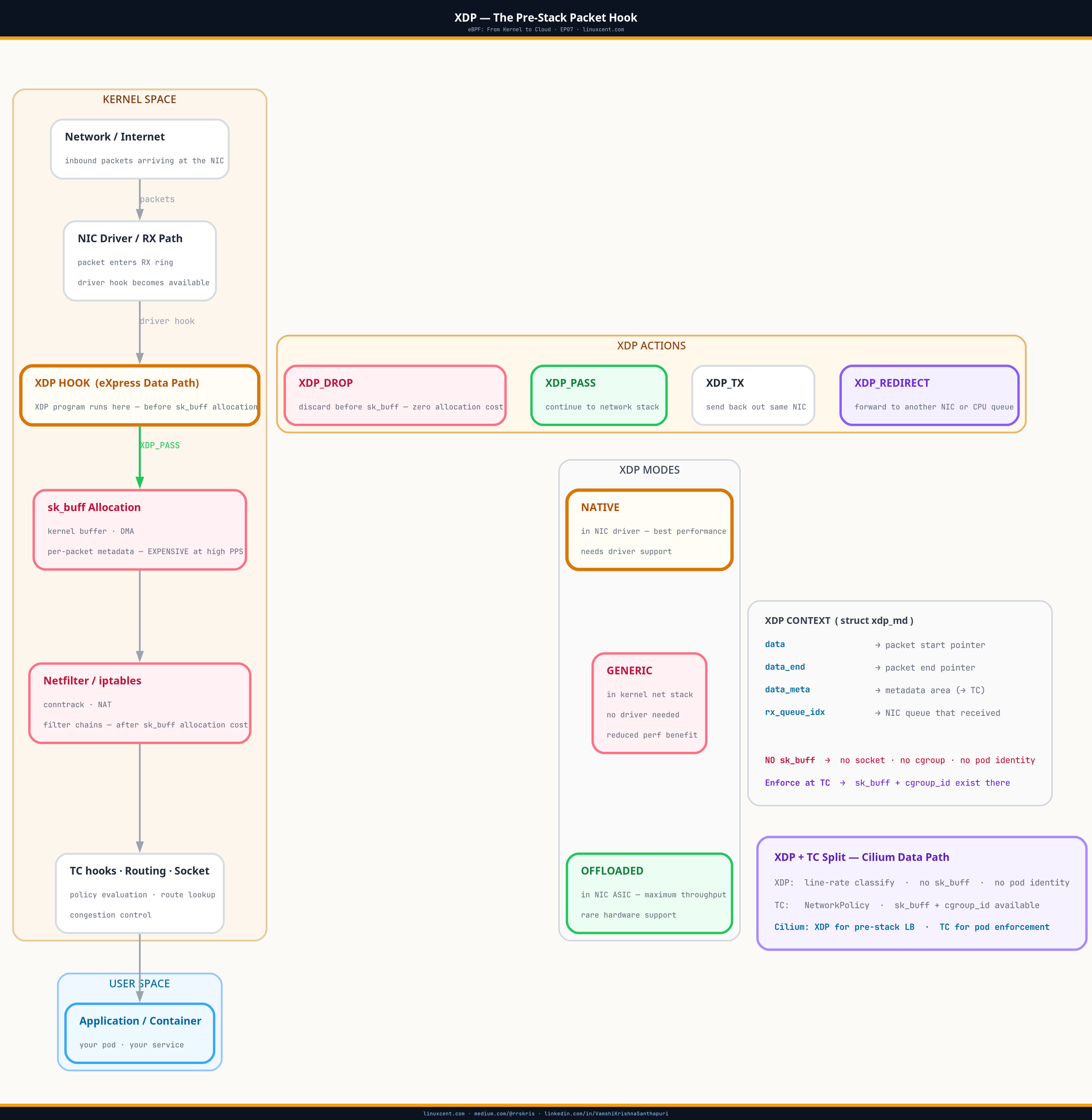

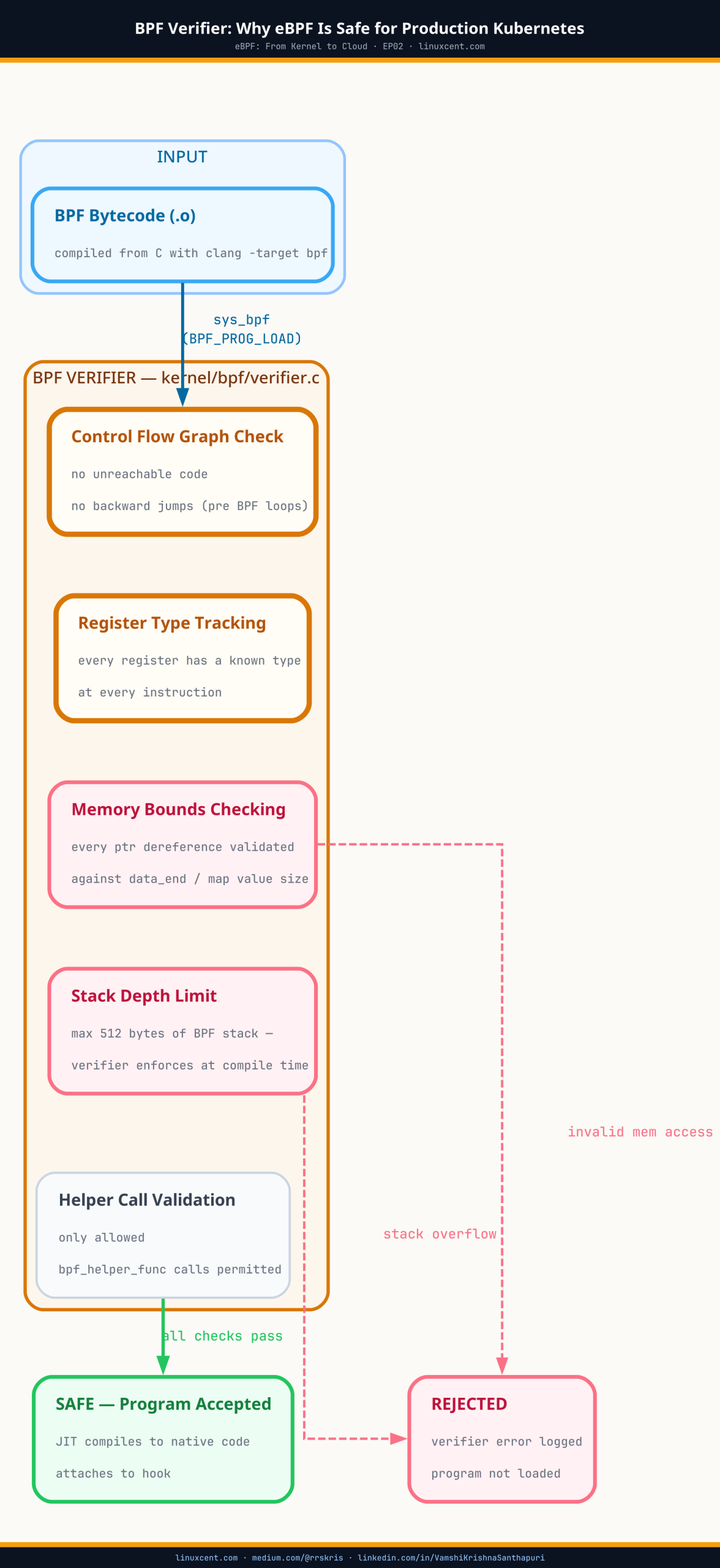

eBPF flow observability closes this gap by attaching programs directly to the network path — at the TC hook, which fires on every packet crossing a network interface — and recording what the kernel actually does.

How TC Hook Flow Programs Work

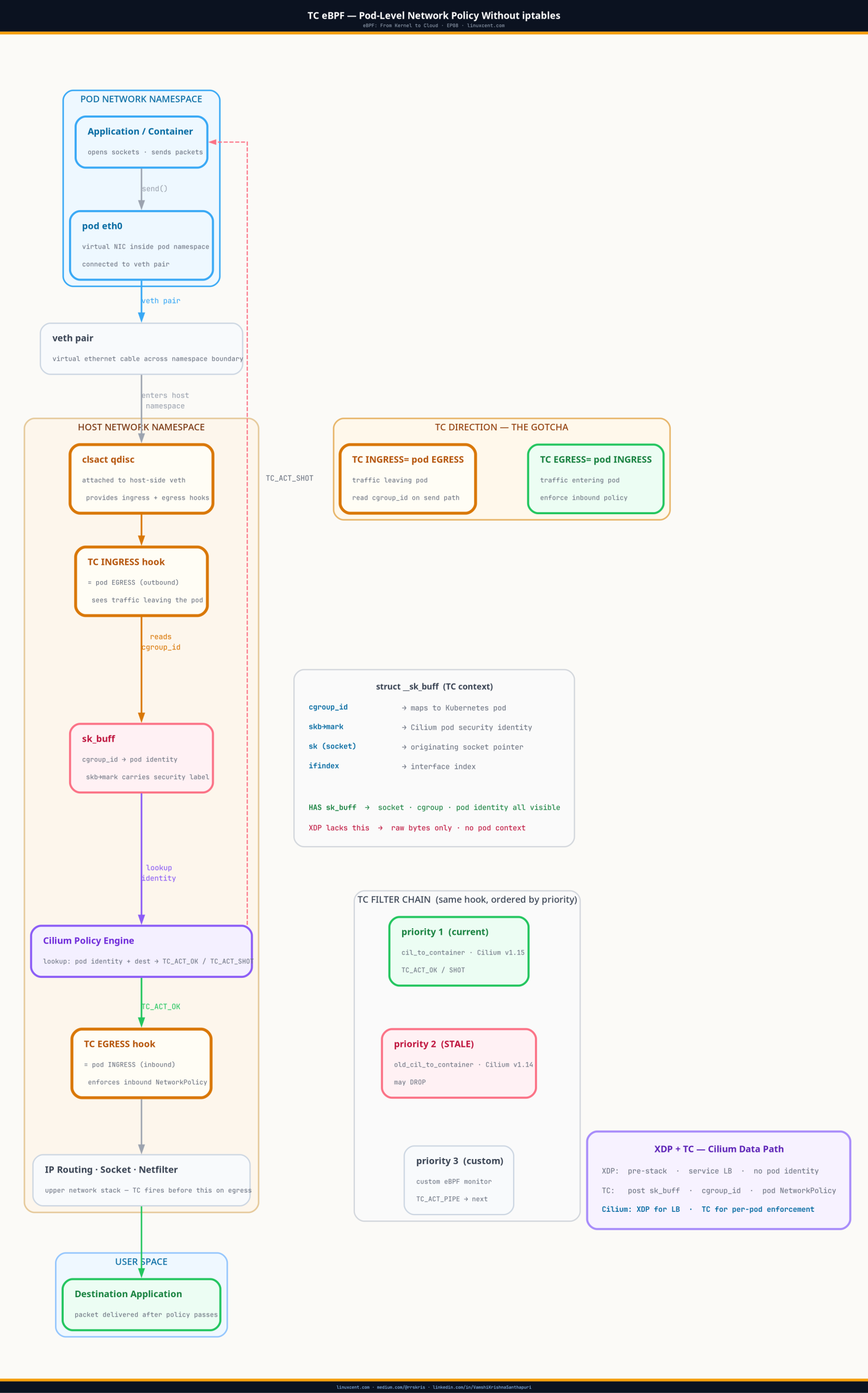

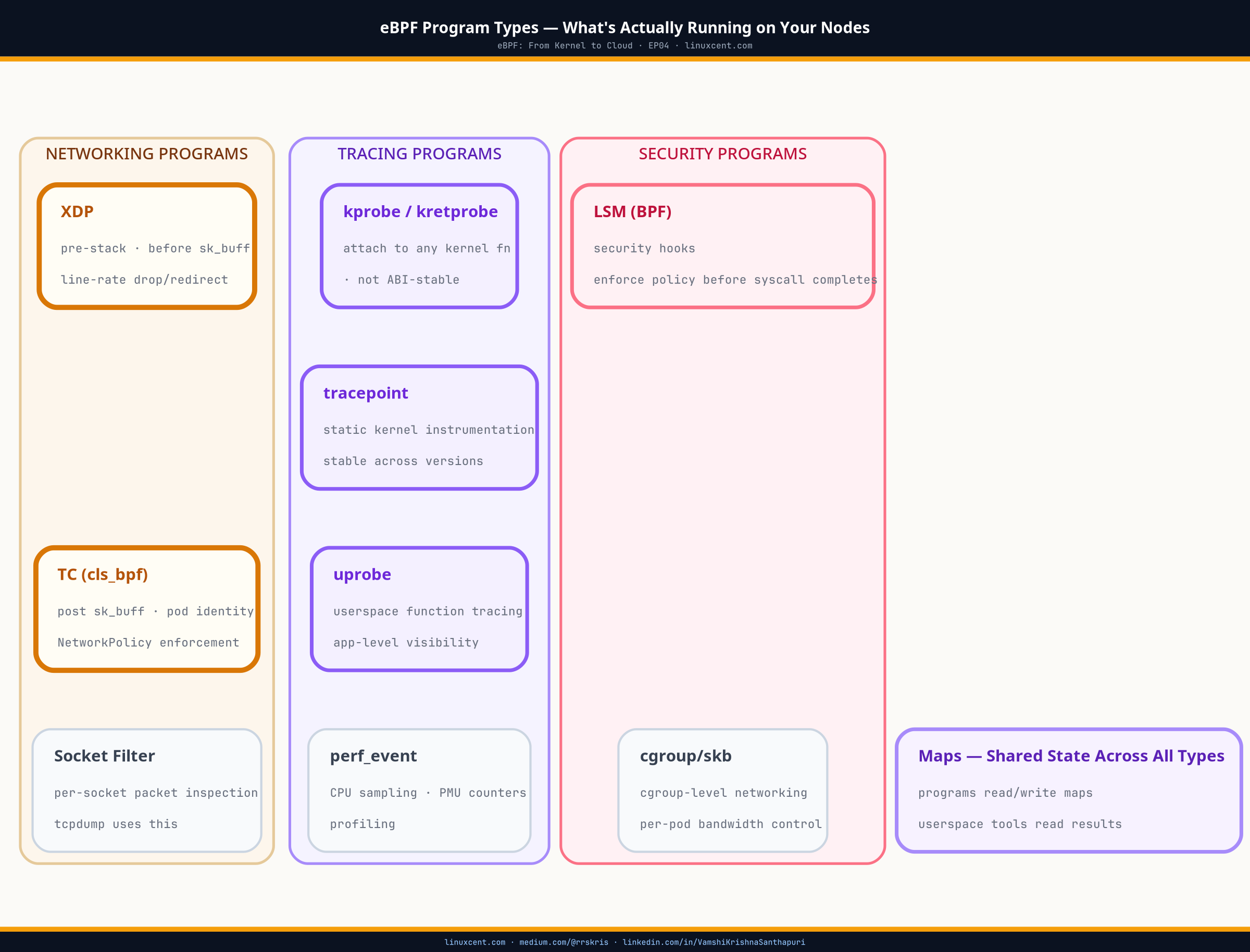

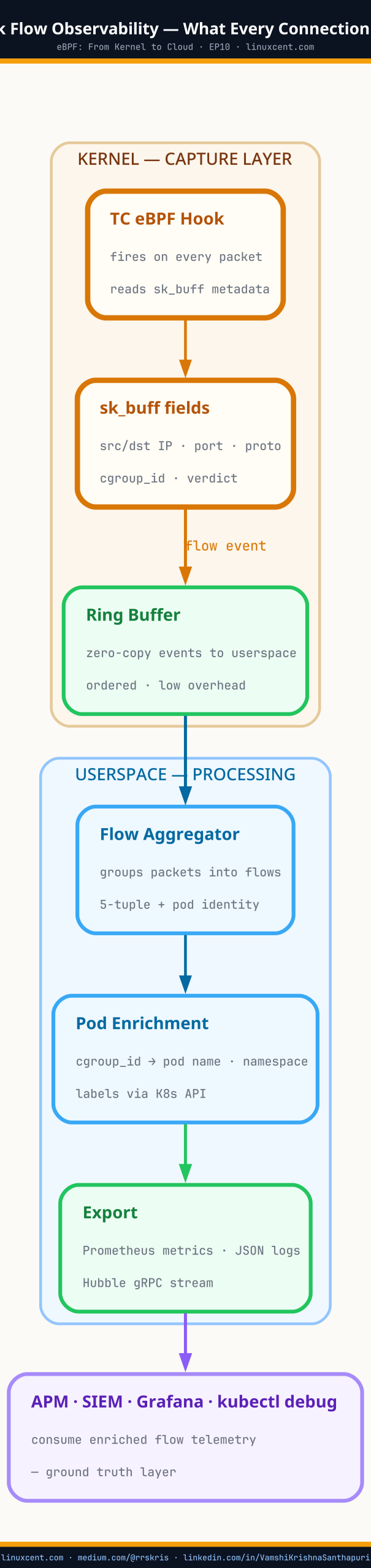

EP08 covered TC eBPF programs for pod network policy. Flow observability uses the same attachment point with a different purpose: instead of allowing or dropping packets, the program reads packet metadata and writes it to a map or ring buffer.

Pod sends packet

↓

veth interface (lxcXXXX)

↓

TC clsact/ingress hook fires (host side of the veth: traffic leaving the pod arrives here as ingress)

↓

eBPF program reads:

- src IP, dst IP

- src port, dst port

- protocol

- packet size

- TCP flags (SYN, ACK, FIN, RST); the TCP header has no retransmit flag, so retransmits are inferred from repeated sequence numbers

↓

Writes event to ringbuf (or perf_event_array)

↓

Userspace consumer reads ringbuf

↓

Aggregates to flow record

↓

Exports to Hubble/Prometheus/flow store

ringbuf: a BPF ring buffer (BPF_MAP_TYPE_RINGBUF), a memory-efficient multi-producer, single-consumer queue shared between kernel eBPF programs and a userspace consumer. The kernel program writes events; the userspace reader drains them. Used instead of perf_event_array in kernel 5.8+ because one buffer is shared across CPUs, which avoids per-CPU memory waste and preserves event ordering. When you see Hubble exporting flows, it’s reading from a ringbuf that the TC program writes to.

The key structural property: the TC hook fires on every packet. Not sampled. Not throttled by default. Every SYN, every ACK, every RST, every retransmit. For flow observability, you typically aggregate at the program level — count packets and bytes per 5-tuple per second, rather than emitting an event per packet — but the raw visibility is there if you need it.
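The per-5-tuple aggregation described above can be modelled in a few lines. This is a Python sketch of the folding logic only, not BPF code: a real flow program would keep these counters in a BPF hash map keyed by the same tuple. The FlowKey type, the aggregate function, and the sample events are hypothetical names and values chosen for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class FlowKey:
    """The 5-tuple that identifies a flow."""
    src_ip: str
    dst_ip: str
    src_port: int
    dst_port: int
    protocol: int  # IP protocol number: 6 = TCP, 17 = UDP


def aggregate(events):
    """Fold per-packet (key, size) events into per-flow packet and byte counters."""
    flows = defaultdict(lambda: {"packets": 0, "bytes": 0})
    for key, size in events:
        flows[key]["packets"] += 1
        flows[key]["bytes"] += size
    return dict(flows)


if __name__ == "__main__":
    gateway = FlowKey("10.244.1.5", "172.16.4.23", 48291, 443, 6)
    dns = FlowKey("10.244.1.5", "10.96.0.10", 53211, 53, 17)
    # Four packets collapse into two flow records.
    events = [(gateway, 1500), (gateway, 1500), (dns, 80), (gateway, 52)]
    for key, counters in aggregate(events).items():
        print(key, counters)
```

The trade-off is the one the paragraph names: one map update per packet instead of one emitted event per packet, at the cost of losing per-packet timing.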

What Retransmit Telemetry Actually Reveals

Most flow observability implementations track TCP retransmits specifically because they are the clearest signal of network-layer trouble invisible to applications.

A TCP retransmit happens when a sender doesn’t receive an ACK within the retransmission timeout (RTO), or when three duplicate ACKs trigger a fast retransmit. After a timeout, the kernel resends the segment and doubles the RTO (exponential backoff). From the application’s perspective, the call takes longer. If retransmits keep clearing, the application sees success, just slow success.
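To make the cost of exponential backoff concrete, the following Python sketch computes how much waiting a run of timeout-driven retransmits adds. The 200 ms starting RTO and 120 s ceiling are assumptions that mirror Linux's usual floor and cap; the real RTO is derived from the measured round-trip time of each connection, so the numbers here are illustrative only.

```python
def backoff_schedule(initial_rto_ms, retransmits, max_rto_ms=120_000):
    """Return the RTO (in ms) waited before each retransmit, doubling each time."""
    schedule = []
    rto = initial_rto_ms
    for _ in range(retransmits):
        schedule.append(rto)
        rto = min(rto * 2, max_rto_ms)  # doubling is capped at the assumed ceiling
    return schedule


if __name__ == "__main__":
    waits = backoff_schedule(initial_rto_ms=200, retransmits=3)
    print(waits, "total added delay:", sum(waits), "ms")
```

Under these assumptions, three retransmits add 1.4 seconds to a request that still ends in success, which is how a healthy-looking service ends up with a p99 far above its norm.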

perf_event: a kernel mechanism for collecting performance data. In eBPF, BPF_MAP_TYPE_PERF_EVENT_ARRAY lets kernel programs push variable-length records to userspace readers via a ring buffer per CPU. Older tools use perf_event_array; newer ones use BPF_MAP_TYPE_RINGBUF (single shared ring, more efficient). If you inspect an older version of Cilium’s flow exporter, you’ll see perf_event writes; newer versions use ringbuf.

To observe retransmits directly with bpftrace:

# Count retransmit events per destination IP — run for 60 seconds

bpftrace -e '

kprobe:tcp_retransmit_skb {

$sk = (struct sock *)arg0;

$daddr = ntop($sk->__sk_common.skc_daddr);

@retransmits[$daddr] = count();

}

interval:s:60 { print(@retransmits); clear(@retransmits); exit(); }

'

Sample output:

Attaching 2 probes...

@retransmits[10.96.0.10]: 2 # DNS service — normal

@retransmits[172.16.4.23]: 847 # payment gateway endpoint ← problem here

@retransmits[10.244.1.5]: 1 # normal pod-to-pod traffic

847 retransmits to a single endpoint in 60 seconds. That’s not noise. That’s a congested or half-open connection being retried 14 times per second by the TCP stack while the application layer averages it into “elevated latency.”

How Cilium Hubble Collects Flow Data

Hubble is the flow observability layer built into Cilium. Understanding how it works lets you reason about what it can and cannot see, and verify what it’s actually collecting.

Hubble’s architecture:

Kernel (per node)

├── TC eBPF programs on all pod veth interfaces

│ write flow events → BPF ringbuf

│

└── Hubble node agent (userspace)

reads ringbuf

enriches with pod metadata (Kubernetes API)

exposes gRPC API

Cluster level

└── Hubble Relay

aggregates per-node gRPC streams

exposes single cluster-wide API

User tooling

└── hubble observe / Hubble UI / Prometheus exporter

The TC programs are writing raw packet events. The Hubble agent is the consumer that translates those events into Kubernetes-aware flow records — adding pod name, namespace, label, and policy verdict on top of the 5-tuple and TCP metadata the kernel provides.
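The enrichment step can be pictured as a lookup from IP address to pod identity. The Python sketch below is a simplified model of that idea, not Hubble's actual implementation: the enrich function, the record field names, and the pod name are all hypothetical, and a real agent would keep the IP-to-pod table current by watching the Kubernetes API.

```python
def enrich(flow, pods_by_ip):
    """Return a copy of a raw flow record with pod identity attached to each side."""
    enriched = dict(flow)
    for side in ("src", "dst"):
        pod = pods_by_ip.get(flow[f"{side}_ip"])
        # IPs outside the cluster have no pod entry; label them explicitly.
        enriched[f"{side}_pod"] = (
            f'{pod["namespace"]}/{pod["name"]}' if pod else "external"
        )
    return enriched


if __name__ == "__main__":
    # Hypothetical IP-to-pod table, as a userspace agent might cache it.
    pods_by_ip = {
        "10.244.1.5": {"namespace": "payments", "name": "payment-svc-7d9f"},
    }
    raw_flow = {"src_ip": "10.244.1.5", "dst_ip": "172.16.4.23", "dst_port": 443}
    print(enrich(raw_flow, pods_by_ip))
```

This split is why the kernel side stays small: the TC program only needs addresses and counters, and everything Kubernetes-specific is joined in afterwards.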

To see where those TC programs are attached:

# On any Cilium node

bpftool net list | grep lxc

# lxce4a1(23) clsact/ingress prog_id 61 ← Hubble flow program on pod interface ingress

# lxce4a1(23) clsact/egress prog_id 62 ← Hubble flow program on pod interface egress

# lxcf7b2(31) clsact/ingress prog_id 63

# lxcf7b2(31) clsact/egress prog_id 64

# Inspect one of those programs to confirm it's reading flow metadata

bpftool prog show id 61

# Output:

# 61: sched_cls name tail_handle_nat tag 3a8e2f1b4c7d9e0a gpl

# loaded_at 2026-04-22T09:13:45+0530 uid 0

# xlated 2144B jited 1382B memlock 4096B map_ids 24,31,38

# btf_id 142

sched_cls is the BPF program type for TC — confirming these are TC-attached flow programs. map_ids 24,31,38 — those are the maps this program reads from and writes to. You can dump any of them:

bpftool map dump id 24 | head -40

# Output (connection tracking entry):

# [{

# "key": {

# "saddr": "10.244.1.5", # ← source pod IP

# "daddr": "172.16.4.23", # ← destination IP

# "sport": 48291, # ← source port

# "dport": 443, # ← destination port

# "nexthdr": 6, # ← protocol: TCP

# "flags": 3 # ← CT_EGRESS | CT_ESTABLISHED

# },

# "value": {

# "rx_packets": 14832, # ← packets received

# "tx_packets": 14831, # ← packets sent

# "rx_bytes": 3841024, # ← bytes received

# "tx_bytes": 3756288, # ← bytes sent

# "lifetime": 21600, # ← seconds until entry expires

# "rx_closing": 0,

# "tx_closing": 0

# }

# }]

That’s the ground truth. Not an APM span. Not a service mesh metric. The actual per-connection counters the kernel is maintaining for that 5-tuple.
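If you want to rank connections rather than read raw entries, a dump in this shape is easy to post-process. The Python sketch below assumes the JSON-like layout of the sample entry above, with the inline annotations removed; the field names are taken from that sample, and the summarize function is a hypothetical helper. Other maps, or nodes without BTF, will produce a different layout.

```python
import json

# One entry in the shape of the sample dump above, without the annotations.
SAMPLE_DUMP = """
[{
  "key": {"saddr": "10.244.1.5", "daddr": "172.16.4.23",
          "sport": 48291, "dport": 443, "nexthdr": 6, "flags": 3},
  "value": {"rx_packets": 14832, "tx_packets": 14831,
            "rx_bytes": 3841024, "tx_bytes": 3756288, "lifetime": 21600}
}]
"""


def summarize(dump_text):
    """Return one summary row per connection, largest byte count first."""
    rows = []
    for entry in json.loads(dump_text):
        key, value = entry["key"], entry["value"]
        rows.append({
            "flow": f'{key["saddr"]}:{key["sport"]} -> {key["daddr"]}:{key["dport"]}',
            "total_bytes": value["rx_bytes"] + value["tx_bytes"],
            "total_packets": value["rx_packets"] + value["tx_packets"],
        })
    return sorted(rows, key=lambda row: row["total_bytes"], reverse=True)


if __name__ == "__main__":
    for row in summarize(SAMPLE_DUMP):
        print(row["flow"], row["total_bytes"], "bytes", row["total_packets"], "packets")
```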

Writing a Minimal Flow Observer with bpftrace

You don’t need Cilium or Hubble to get flow telemetry. bpftrace can produce it directly on any node with BTF:

# Flow table: send-call counts per process and destination, printed every 30 seconds for 2 minutes

bpftrace -e '

kprobe:tcp_sendmsg {

$sk = (struct sock *)arg0;

$daddr = ntop($sk->__sk_common.skc_daddr);

// skc_dport is stored in network byte order; swap both bytes to get the port

$raw = $sk->__sk_common.skc_dport;

$dport = ($raw >> 8) | (($raw << 8) & 0xff00);

@flows[comm, $daddr, $dport] = count();

}

interval:s:30 { print(@flows); clear(@flows); }

interval:s:120 { exit(); }

'

Sample output (every 30 seconds):

@flows[curl, 93.184.216.34, 443]: 12 # curl → example.com:443

@flows[coredns, 10.96.0.10, 53]: 341 # CoreDNS upstream queries

@flows[payment-svc, 172.16.4.23, 443]: 1204 # payment service → gateway

@flows[nginx, 10.244.2.3, 8080]: 89 # nginx → upstream pod
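The destination port deserves a second look, because skc_dport is stored in network (big-endian) byte order. The Python sketch below reproduces what a little-endian CPU reads from that field for port 443 and compares a bare right shift by 8 with a full 16-bit byte swap. The function names are hypothetical; the arithmetic is the point.

```python
import struct


def port_as_seen_by_little_endian_cpu(port):
    """Value a little-endian CPU loads from a 16-bit field stored big-endian."""
    wire_bytes = struct.pack(">H", port)        # network byte order, as stored
    return struct.unpack("<H", wire_bytes)[0]   # raw load on a little-endian host


def swap16(value):
    """Full 16-bit byte swap, the conversion ntohs() performs on little-endian."""
    return ((value >> 8) | (value << 8)) & 0xFFFF


if __name__ == "__main__":
    raw = port_as_seen_by_little_endian_cpu(443)
    print("raw field value:", raw)
    print("raw >> 8       :", raw >> 8)
    print("swap16(raw)    :", swap16(raw))
```

For port 443 the raw value is 47873, a bare shift yields 187, and only the full swap recovers 443, so a flow table that shows unfamiliar low port numbers is worth checking for this mistake.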

For retransmit tracking specifically:

# Combined send + retransmit watcher; runs until Ctrl-C

bpftrace -e '

kprobe:tcp_retransmit_skb {

$sk = (struct sock *)arg0;

$daddr = ntop($sk->__sk_common.skc_daddr);

// Keyed by destination only: retransmits often fire from timer context, so comm is unreliable here

@retx[$daddr] = count();

}

kprobe:tcp_sendmsg {

$sk = (struct sock *)arg0;

$daddr = ntop($sk->__sk_common.skc_daddr);

@sends[$daddr] = count();

}

interval:s:10 {

printf("=== Retransmits vs sends per destination (last 10s) ===\n");

print(@retx);

print(@sends);

clear(@retx);

clear(@sends);

}

'

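This gives you both the volume of sends and the retransmit count side by side. The ratio tells you whether retransmits are a rounding error (0.01%) or a signal (5%+). Because tcp_sendmsg counts send calls rather than segments on the wire, treat the ratio as an approximation, not an exact loss rate.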
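Turning the two maps into a ratio is a small calculation. The Python sketch below assumes both maps have been reduced to dictionaries keyed by destination IP; the counts are made-up illustrative values, and the 5% threshold is the one quoted above rather than a universal constant.

```python
def retransmit_ratios(retx, sends):
    """Return retransmits divided by send calls for every destination with sends."""
    ratios = {}
    for dest, sent in sends.items():
        if sent > 0:
            ratios[dest] = retx.get(dest, 0) / sent
    return ratios


def classify(ratio, signal_threshold=0.05):
    """Label a ratio using the 5% rule of thumb from the text."""
    return "signal" if ratio >= signal_threshold else "background"


if __name__ == "__main__":
    # Hypothetical counts for one 10-second window.
    retx = {"172.16.4.23": 140, "10.244.1.5": 1}
    sends = {"172.16.4.23": 2000, "10.244.1.5": 10000}
    for dest, ratio in retransmit_ratios(retx, sends).items():
        print(f"{dest}: {ratio:.2%} {classify(ratio)}")
```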

⚠ Production Gotchas

Map size bounds matter. Connection tracking maps default to tens of thousands of entries. On nodes with high connection churn (serverless, short-lived batch jobs), maps can fill and start dropping new entries silently. bpftool map show id N reports max_entries but not the live entry count, so compare it against the number of entries returned by bpftool map dump id N and monitor utilization over time. Cilium exposes this as cilium_bpf_map_pressure in Prometheus.

Per-packet overhead on high-throughput interfaces. A TC program that fires on every packet on a 10 Gbps interface can see millions of packets per second. Aggregating at the program level (count per 5-tuple rather than emit per packet) keeps overhead manageable, and Cilium does this. A naive bpftrace one-liner that emits a perf event per packet will saturate the perf ring buffer under real load. Use ringbuf write paths or aggregate before emitting.
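A quick back-of-envelope calculation shows where "millions of packets per second" comes from. This Python sketch assumes standard Ethernet framing, where each frame costs an extra 20 bytes on the wire for the preamble, start delimiter, and inter-frame gap. It gives the theoretical line-rate ceiling, not a measurement of any real workload.

```python
# Preamble (7) + start frame delimiter (1) + inter-frame gap (12), in bytes.
ETHERNET_WIRE_OVERHEAD = 20


def packets_per_second(link_bps, frame_bytes):
    """Upper bound on frames per second for a given link speed and frame size."""
    bits_on_wire = (frame_bytes + ETHERNET_WIRE_OVERHEAD) * 8
    return link_bps // bits_on_wire


if __name__ == "__main__":
    ten_gbps = 10_000_000_000
    # 64-byte frames are the Ethernet minimum; 1518 bytes is a full 1500-byte MTU frame.
    print("64-byte frames  :", packets_per_second(ten_gbps, 64))
    print("1518-byte frames:", packets_per_second(ten_gbps, 1518))
```

At the small-frame end that is roughly 14.9 million invocations of the TC program per second, leaving a budget of well under 100 nanoseconds per packet, which is why per-packet event emission does not scale.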

TC hook placement and direction confusion. Ingress TC on a pod’s veth (lxcXXXX) sees egress traffic from the pod’s perspective — because the host sees the packet arriving on the veth after the pod sent it. This reversal is consistent but confusing when you’re reading direction labels in flow records. EP08 covered this in detail for policy enforcement; the same asymmetry applies to flow data.

Retransmit counters reset on connection close. If you’re tracking retransmit totals for a long-lived connection, the count is stored in the kernel’s socket state and is cleared when the socket closes. For persistent tracking across reconnects, aggregate at the flow level in userspace before the connection closes.

Hubble flow visibility requires pod interfaces. Hubble only sees traffic that crosses a pod’s veth interface. Node-to-node traffic that doesn’t involve a pod (e.g., node SSH, kubelet-to-API-server on the node IP) is not captured by default. For host-level network observability, you need a TC program on the physical interface (eth0, ens3), not just on pod veth pairs.

Quick Reference

| What you want to see | Command |
|---|---|
| What TC programs are attached | bpftool net list |
| Which maps a program uses | bpftool prog show id N (check map_ids) |
| Connection tracking entries | bpftool map dump id N |
| Retransmits per destination | bpftrace -e 'kprobe:tcp_retransmit_skb { ... }' |
| Send calls per process | bpftrace -e 'kprobe:tcp_sendmsg { @[comm] = count(); }' |
| Hubble flow stream (Cilium) | hubble observe --follow |
| Hubble flows for one pod | hubble observe --pod mynamespace/mypod --follow |
| Verify map pressure | bpftool map show id N for max_entries, compared against the number of entries in bpftool map dump id N |

| Kernel function | What it marks |
|---|---|
| tcp_sendmsg | Data being sent on a TCP socket |
| tcp_recvmsg | Data being received on a TCP socket |
| tcp_retransmit_skb | A segment being retransmitted |
| tcp_v4_send_reset (tracepoint: tcp:tcp_send_reset) | RST being sent |
| tcp_fin | FIN received from the peer (remote side started teardown) |
| tcp_connect | New outbound TCP connection attempt |

Key Takeaways

- Network flow observability with eBPF attaches TC programs that record every connection event continuously — not sampled, not throttled, not filtered by what the application reports

- Retransmit telemetry from tcp_retransmit_skb reveals congestion and endpoint failures that are structurally invisible to application-layer monitoring tools

- Cilium Hubble, Pixie, and Retina are all eBPF flow exporters: they run TC programs, drain a ringbuf, enrich with Kubernetes metadata, and expose the result over an API

- You can verify what any flow tool is actually collecting with bpftool net list, bpftool map list, bpftool prog show, and bpftool map dump: four commands, no documentation needed

- Map sizing and per-packet overhead are the two production concerns; aggregate at the kernel level, bound your maps, and monitor map pressure

- The kernel’s connection tracking map is the ground truth. APM dashboards, service mesh metrics, and load balancer health checks are all interpretations of what that map contains

What’s Next

Flow observability tells you what connections exist. EP11 goes one level deeper: what names your pods are resolving those connections to. DNS is where a compromised workload first reveals itself — it queries a domain that has no business being queried from a production pod, and if you’re not watching the kernel-level DNS path, you won’t see it until after the damage.

DNS observability at the kernel level uses tracepoint hooks on the DNS syscall path — the same ground-truth approach as flow telemetry, but for name resolution: every query, every response, tied to the pod that made it, without deploying a sidecar.

Next: DNS observability at the kernel level — what your pods are actually resolving
