eBPF: From Kernel to Cloud, Episode 9


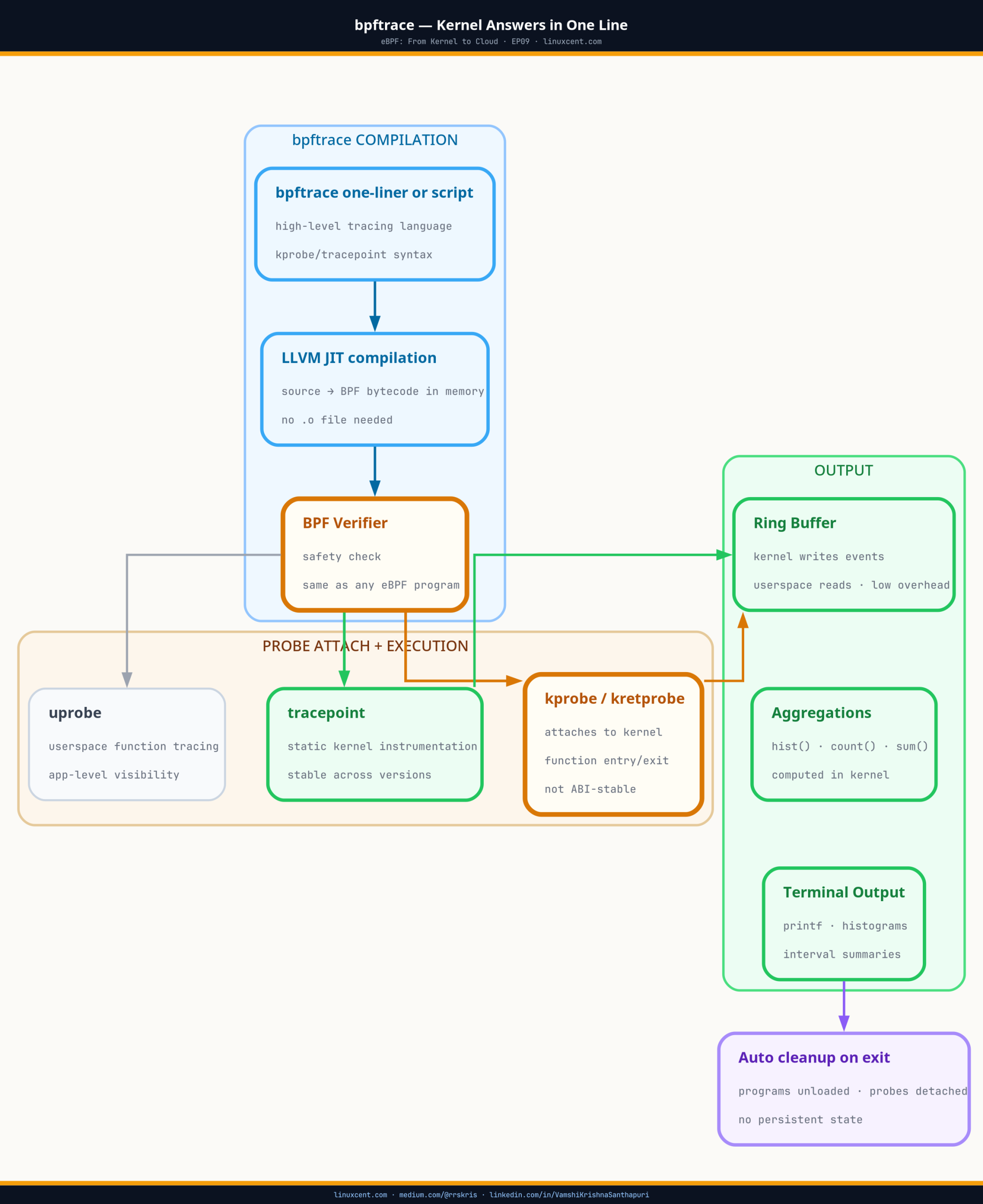

Architecture Overview

TL;DR

- bpftrace is an eBPF compiler, not a monitoring agent: every one-liner compiles, loads, runs, and cleans up a complete kernel program (think of it like `kubectl exec`, but for asking the kernel a direct question, with no agent, no sidecar, no prior setup)

- kretprobe and tracepoint cover most production debugging needs; use tracepoints for stability across kernel versions

- The security use cases are unique: kernel-level observation that an attacker inside a container cannot suppress

- Every connection, every file open, every process spawn — observable in real time with a single command, no prior instrumentation

- Production caution: high-frequency probes on hot paths add overhead; filter by pid/comm, bound every run with a timeout, and watch %si

- Container PIDs are host-namespace PIDs in bpftrace; use `curtask->real_parent->tgid` (the parent's host PID) to walk up the process tree and correlate activity to a container

bpftrace turns any kernel question into a one-liner — compiling, loading, and attaching a complete eBPF program in seconds, with no agents, no restarts, and no prior instrumentation on the node. When something is wrong on a node right now and you don’t know where to look, it’s how you ask the kernel a direct question. That’s what EP09 is about.

Quick Check: Is bpftrace Available on Your Node?

Before the one-liner toolkit — verify bpftrace is installed and working on a cluster node:

```bash
# SSH into a worker node, then:
bpftrace --version
# bpftrace v0.19.0   ← any version ≥ 0.16 supports the patterns in this episode

# Verify BTF is available (required for struct access one-liners)
ls /sys/kernel/btf/vmlinux && echo "BTF available"

# The simplest possible one-liner: count syscalls per process for 5 seconds.
# coreutils timeout sends SIGINT, so bpftrace prints its maps just as it does on Ctrl-C.
timeout -s INT 5 bpftrace -e 'tracepoint:raw_syscalls:sys_enter { @[comm] = count(); }'
```

Expected output (abridged):

```text
Attaching 1 probe...

@[sshd]: 47
@[node_exporter]: 203
@[containerd]: 312
@[kubelet]: 841
```

Each line is a process name and how many syscalls it made in 5 seconds. If this runs and produces output, everything in this episode will work on your node.
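bpftrace prints count maps sorted by value, lowest first. When the key list runs long, as it can with the syscall-frequency one-liner later in this episode, it helps to rank the `@[key]: value` lines and keep only the top entries. The following is a minimal sketch that assumes exactly that text format. `rank_map` is a hypothetical helper, not part of bpftrace.

```bash
# rank_map [N]: hypothetical helper that ranks bpftrace map lines of the form
#   @[key]: value
# by value, highest first, and keeps the top N (default 10).
# Non-map lines such as "Attaching 1 probe..." are skipped.
rank_map() {
  local n="${1:-10}"
  # Rewrite "@[key]: value" as "value key", drop everything else, then sort.
  sed -n 's/^@\[\(.*\)]: \([0-9][0-9]*\)$/\2 \1/p' | sort -rn | head -n "$n"
}
```

For example: `timeout -s INT 5 bpftrace -e 'tracepoint:raw_syscalls:sys_enter { @[comm] = count(); }' | rank_map 5`.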

Not on a self-managed node? EKS managed nodes and GKE nodes don't have bpftrace pre-installed, but you can run it from a privileged debug pod: `kubectl debug node/<node-name> -it --profile=sysadmin --image=quay.io/iovisor/bpftrace`. The `sysadmin` debugging profile, available in recent kubectl releases, is what makes the pod privileged. The tool runs on the host kernel, so you get full kernel visibility even from a pod.
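Before relying on any of this on an unfamiliar node or in a debug pod, you can bundle the checks above into one function. This is a sketch under stated assumptions:

- `bpftrace_preflight` and `version_ge` are hypothetical helpers.
- The version is parsed from the `bpftrace v0.19.0` output format shown above.
- The version comparison relies on GNU `sort -V`.

```bash
# version_ge A B: succeed if version A >= version B (relies on GNU sort -V).
version_ge() {
  [ "$(printf '%s\n%s\n' "$2" "$1" | sort -V | head -n 1)" = "$2" ]
}

# bpftrace_preflight: hypothetical helper checking what this episode relies on:
# bpftrace on PATH, version >= 0.16, and kernel BTF for struct access.
bpftrace_preflight() {
  local ver
  if ! command -v bpftrace >/dev/null 2>&1; then
    echo "bpftrace not found on PATH" >&2
    return 1
  fi
  # "bpftrace v0.19.0" -> "0.19.0"
  ver=$(bpftrace --version | awk '{ print $2 }' | sed 's/^v//')
  if ! version_ge "$ver" 0.16; then
    echo "bpftrace $ver is older than 0.16" >&2
    return 1
  fi
  if [ ! -e /sys/kernel/btf/vmlinux ]; then
    echo "no BTF at /sys/kernel/btf/vmlinux: struct-access one-liners will fail" >&2
    return 1
  fi
  echo "bpftrace $ver with BTF: ready"
}
```

Source the snippet, then run `bpftrace_preflight`. A non-zero exit status means one of the checks failed, and the message on stderr says which.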

A node in production started showing elevated TCP latency — p99 at 180ms, where p99 was normally under 10ms. The application logs were clean. The APM dashboard showed nothing unusual at the service level. CPU, memory, disk: all normal. The load balancer health checks were passing.

I had 12 minutes before the on-call escalation would have gone to the application team and started a war room.

I ran one command:

```bash
timeout -s INT 10 bpftrace -e 'kretprobe:tcp_recvmsg { @bytes[comm] = hist(retval); }'
```

Ten seconds of sampling. The histogram output showed a single process — backup-agent — receiving 4MB chunks at irregular intervals. Not the application. Not the service mesh. A backup agent that runs at the infrastructure layer, saturating the receive path with large reads during its scheduled window.

Found in 9 seconds. War room averted.

What made that possible is something most engineers don’t know about bpftrace: that one-liner is not a monitoring query. It’s a complete eBPF program — compiled, loaded into the kernel, attached to the tcp_recvmsg kernel return probe, run, and cleaned up — all in ten seconds. bpftrace is a compiler that happens to have a very convenient command-line interface.

What bpftrace Actually Is

bpftrace is not a monitoring tool. It’s an eBPF compiler with a high-level scripting language designed for one-shot investigation.

When you run bpftrace -e 'kretprobe:tcp_recvmsg { ... }', this is what happens:

```text
Your one-liner
      ↓
bpftrace parses it and generates LLVM IR
(Clang is only needed to parse C headers and struct definitions)
      ↓
LLVM's BPF backend emits eBPF bytecode (held in memory)
      ↓
Kernel verifier validates the program
      ↓
JIT compiler compiles it to native machine code
      ↓
Program attaches to the tcp_recvmsg kretprobe
      ↓
Runs until Ctrl-C, exit(), or a timeout
      ↓
Output printed, maps freed, program detached
```

The kernel doesn’t know bpftrace wrote the program. It’s the same path as Falco, Cilium, Tetragon — kernel program loaded via the BPF syscall, verified, JIT-compiled, attached to a probe. bpftrace just wraps that entire process in a scripting language that takes 30 seconds to write instead of an afternoon.

This is why bpftrace can answer questions that no other tool can: it compiles to a kernel-level observer that fires on any event in the kernel, on any process, on any container — without any prior instrumentation.

The Four Probe Types You’ll Use Most

bpftrace supports 20+ probe types. These four cover most production debugging:

kprobe / kretprobe — Kernel Functions

Attaches to the entry (kprobe) or return (kretprobe) of any kernel function. The most powerful probes for understanding what the kernel is actually doing.

```bash
# Fire on every call to tcp_connect: who's making new TCP connections?
bpftrace -e 'kprobe:tcp_connect { printf("%s PID %d connecting\n", comm, pid); }'

# On return from tcp_recvmsg: how large are the reads per process?
bpftrace -e 'kretprobe:tcp_recvmsg { @[comm] = hist(retval); }'

# Count calls to vfs_write per process (file write activity)
bpftrace -e 'kprobe:vfs_write { @[comm] = count(); }'
```

Limitation: kernel functions are internal and can change between kernel versions. Use tracepoints (below) for stability when you can.

kprobe instability: a function targeted by a kprobe can be inlined by the kernel compiler, which embeds the function's code at its call sites instead of calling it. Two things can happen:

- If every call site is inlined, the symbol disappears and the kprobe cannot attach.
- If only some call sites are inlined, the probe attaches but silently misses the inlined calls.

Verify before relying on one: `bpftrace -l 'kprobe:function_name'` returns nothing when the function cannot be probed. In that case, use a tracepoint equivalent instead.
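To check several kprobe targets at once, for example before copying one-liners to a node with a different kernel, a small wrapper around `bpftrace -l` helps. This is a hypothetical helper. It can only detect functions that are missing entirely; a function that is listed may still be partially inlined.

```bash
# check_kprobes FN...: hypothetical helper; for each kernel function name,
# report whether `bpftrace -l` lists a kprobe for it on this kernel.
# Exit status is 1 if any function is missing, 0 otherwise.
check_kprobes() {
  local fn missing=0
  for fn in "$@"; do
    if [ -n "$(bpftrace -l "kprobe:$fn" 2>/dev/null)" ]; then
      printf '%-8s kprobe:%s\n' ok "$fn"
    else
      printf '%-8s kprobe:%s\n' missing "$fn"
      missing=1
    fi
  done
  return "$missing"
}
```

`check_kprobes tcp_connect tcp_recvmsg vfs_open` prints one line per function and exits non-zero if any is missing.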

tracepoint — Stable Kernel Trace Points

Tracepoints are stable hooks explicitly placed in the kernel source. Unlike kprobes, they are maintained as a stable interface, so they rarely change or disappear between versions. Use these for anything you need to work reliably across a fleet with mixed kernel versions.

```bash
# Every file open: process name + filename
bpftrace -e 'tracepoint:syscalls:sys_enter_openat {
  printf("%s %s\n", comm, str(args->filename));
}'

# Every outbound connect() call: process name and PID
# (for destination IP and port, use the kprobe:tcp_connect one-liner in the toolkit below)
bpftrace -e 'tracepoint:syscalls:sys_enter_connect {
  printf("%-16s %-6d\n", comm, pid);
}'

# List the syscall tracepoints (hundreds of them)
bpftrace -l 'tracepoint:syscalls:*' | head -30
```

uprobe — Userspace Function Probes

Attaches to a specific function in a userspace binary or library. Useful for observing application behaviour without recompiling.

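```bash
# What bash commands are being typed on this node?
# readline() returns the line the user entered, so probe its return (uretprobe), not its entry.
bpftrace -e 'uretprobe:/bin/bash:readline { printf("%s\n", str(retval)); }'

# Python function calls (depending on the build, PyObject_Call may live in libpython3.*.so instead)
bpftrace -e 'uprobe:/usr/bin/python3:PyObject_Call { printf("Python call: pid %d\n", pid); }'
```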

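From a security standpoint: this is how you observe what an attacker is typing in an interactive shell they've obtained on your node. You see it in real time, from the kernel, without touching the terminal session. The probe is bound to the host's /bin/bash binary. A shell running inside a container uses the container image's own bash, so this probe does not cover it.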

interval — Periodic Sampling

Runs a block of code on a fixed interval. Used for aggregation and periodic stats.

```bash
# Print the top file-opening processes every 5 seconds
bpftrace -e '
kprobe:vfs_open { @[comm] = count(); }
interval:s:5 { print(@); clear(@); }
'
```

The One-Liner Toolkit: Runnable Right Now

These run on any Linux node whose kernel exposes BTF at /sys/kernel/btf/vmlinux (for example Ubuntu 20.10 and later, and most managed K8s node images):

```bash
# What files is every process opening right now? (30-second view)
timeout -s INT 30 bpftrace -e 'tracepoint:syscalls:sys_enter_openat {
  printf("%-16s %s\n", comm, str(args->filename));
}'
```

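```bash
# Who is making DNS queries? (IPv4 UDP to port 53 from any container, no sidecar needed.
# Sees resolvers that connect() their UDP socket before sending, as glibc's resolver does;
# resolvers that send with unconnected sendto() calls are not covered.)
bpftrace -e 'kprobe:udp_sendmsg {
  $sk = (struct sock *)arg0;
  $dport = $sk->__sk_common.skc_dport;
  $dport = ($dport >> 8) | (($dport << 8) & 0xff00);   // network to host byte order (little-endian CPUs)
  if ($dport == 53) {
    printf("%-16s PID %-6d → %s\n", comm, pid, ntop($sk->__sk_common.skc_daddr));
  }
}'
```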

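```bash
# Latency histogram (nanoseconds) for all read() syscalls: find the slow process.
# The /@start[tid]/ filter skips reads that began before tracing started.
timeout -s INT 15 bpftrace -e '
tracepoint:syscalls:sys_enter_read { @start[tid] = nsecs; }
tracepoint:syscalls:sys_exit_read /@start[tid]/ {
  $latency = nsecs - @start[tid];
  @latency[comm] = hist($latency);
  delete(@start[tid]);
}
END { clear(@start); }'
```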

```bash
# Which process is using the most CPU right now? (99 Hz sampling on every CPU)
timeout -s INT 10 bpftrace -e 'profile:hz:99 { @[comm] = count(); }'
```

```bash
# Real-time syscall frequency by process and syscall number: find unusual process activity
timeout -s INT 10 bpftrace -e 'tracepoint:raw_syscalls:sys_enter { @[comm, args->id] = count(); }' \
  | sort -k3 -rn | head -20
```

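```bash
# New IPv4 TCP connections over the next 30 seconds: process and destination
timeout -s INT 30 bpftrace -e 'kprobe:tcp_connect {
  $sk = (struct sock *)arg0;
  if ($sk->__sk_common.skc_family == 2) {   // 2 = AF_INET
    $dport = $sk->__sk_common.skc_dport;
    $dport = ($dport >> 8) | (($dport << 8) & 0xff00);   // network to host byte order (little-endian CPUs)
    printf("%-16s → %s:%d\n", comm, ntop($sk->__sk_common.skc_daddr), $dport);
  }
}'
```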

```bash
# What is a specific PID doing? (replace 12345 with a host-namespace PID)
bpftrace -e 'tracepoint:syscalls:sys_enter_openat /pid == 12345/ {
  printf("%s\n", str(args->filename));
}'
```

Each of these compiles and loads in under 2 seconds. They leave no persistent state. When they exit, the kernel reverts to exactly the state it was in before.
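Several of these one-liners use hist(). By default, hist() counts values in power-of-two buckets and prints each bucket as a range such as [4M, 8M). You may want to check by hand which bucket a value lands in, for example a 180 ms latency expressed in nanoseconds. A hypothetical shell helper for that, assuming the default power-of-two bucketing:

```bash
# hist_bucket V: print the power-of-two range "lo hi", meaning [lo, hi),
# that a positive integer V falls into with hist()'s default bucketing.
# hist() also has dedicated buckets for 0 and negative values, which
# this sketch does not handle.
hist_bucket() {
  local v="$1" lo=1
  # Double lo until the next doubling would pass v.
  while [ $((lo * 2)) -le "$v" ]; do
    lo=$((lo * 2))
  done
  echo "$lo $((lo * 2))"
}
```

For example, `hist_bucket 180000000` places 180 ms in the [128M, 256M) nanosecond bucket, printed as `134217728 268435456`.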

The Security Use Cases

Watching an Active Session

If you suspect a process is running commands you didn’t deploy:

```bash
# See every interactive command typed into the host's /bin/bash, in real time
bpftrace -e 'uretprobe:/bin/bash:readline { printf("%-6d %s\n", pid, str(retval)); }'

# Every process spawn: PID, parent PID, command
bpftrace -e 'tracepoint:syscalls:sys_enter_execve {
  printf("%-6d %-6d %s\n", pid, curtask->real_parent->tgid, str(args->filename));
}'
```

This is the kernel-level version of watching /var/log/auth.log, except it can't be suppressed from inside a container, because the probe is loaded from the host and runs in kernel space. An attacker who has compromised a container with root inside the container cannot prevent a bpftrace program on the host from observing their syscalls. Root on the host itself is a different matter, since host root can simply stop bpftrace.

Detecting Unexpected Network Activity

```bash
# Any process making a TCP connection to a port other than 80, 443 or 53
bpftrace -e 'kprobe:tcp_connect {
  $sk = (struct sock *)arg0;
  $port = $sk->__sk_common.skc_dport;
  $port = ($port >> 8) | (($port << 8) & 0xff00);   // network to host byte order (little-endian CPUs)
  if ($port != 80 && $port != 443 && $port != 53) {
    printf("%-16s port %d\n", comm, $port);
  }
}'
```

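```bash
# UDP datagrams sent with an explicit IPv4 destination (sendto/sendmsg) to any port
# other than 53, e.g. a resolver or tunnel on a non-standard port
bpftrace -e 'kprobe:udp_sendmsg {
  $msg = (struct msghdr *)arg1;
  $sin = (struct sockaddr_in *)($msg->msg_name);   // NULL for connect()ed sockets
  if ($sin->sin_family == 2) {                      // 2 = AF_INET
    $port = $sin->sin_port;
    $port = ($port >> 8) | (($port << 8) & 0xff00);   // network to host byte order (little-endian CPUs)
    if ($port != 53) {
      printf("%-16s → %s:%d\n", comm, ntop($sin->sin_addr.s_addr), $port);
    }
  }
}'
```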
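Destination ports in struct sock (skc_dport) and struct sockaddr_in (sin_port) are stored in network byte order (big-endian). On a little-endian node such as x86-64, reading that field as a plain integer gives the two bytes swapped, so it has to be byte-swapped before comparing or printing. Shifting right by 8 alone is only correct for ports below 256; 443 would come out as 187. The same arithmetic in shell, handy for sanity-checking raw values, as a hypothetical helper:

```bash
# be16_to_host RAW: recover a port from a 16-bit network-order (big-endian)
# field that was read as a plain integer on a little-endian CPU, by swapping
# its two bytes.
be16_to_host() {
  local raw="$1"
  echo $(( ((raw >> 8) & 0xff) | ((raw << 8) & 0xff00) ))
}

# Why ">> 8" alone is not enough: it only recovers the high byte.
# 443 is 0x01BB; read little-endian it becomes 0xBB01 = 47873.
#   be16_to_host 47873   -> 443
#   echo $(( 47873 >> 8 ))  -> 187
```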

Watching File Access on Sensitive Paths

```bash
# Any open of /etc/passwd, /etc/shadow, or anything under /root/ (absolute paths only)
bpftrace -e 'tracepoint:syscalls:sys_enter_openat {
  $f = str(args->filename);
  if ($f == "/etc/passwd" || $f == "/etc/shadow" || strncmp($f, "/root/", 6) == 0) {
    printf("%-16s PID %-6d opened %s\n", comm, pid, $f);
  }
}'
```

Production Gotchas

CPU overhead: bpftrace probes fire synchronously in the traced context. High-frequency probes on hot kernel paths (vfs_read, sys_enter_* without filtering) can add 10–20% overhead. Always bound test runs with a timeout (for example `timeout -s INT 10 bpftrace ...`) and watch %si (softirq time in top) before running on a production node.
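top's %si column is the share of CPU time spent in softirq context. If you'd rather script that check around a bpftrace run, you can compute roughly the same figure from two samples of the aggregate `cpu` line in /proc/stat. This sketch assumes the standard field order documented in proc(5): user, nice, system, idle, iowait, irq, softirq, steal, then guest and guest_nice. Both functions are hypothetical helpers.

```bash
# si_delta_pct A B: softirq share (%) between two "cpu ..." lines from /proc/stat.
# Fields: $2 user, $3 nice, $4 system, $5 idle, $6 iowait, $7 irq, $8 softirq, $9 steal.
# guest/guest_nice ($10, $11) are already included in user/nice, so they are skipped.
si_delta_pct() {
  printf '%s\n%s\n' "$1" "$2" | awk '
    { total = 0; for (i = 2; i <= 9; i++) total += $i; t[NR] = total; si[NR] = $8 }
    END {
      d = t[2] - t[1]
      if (d <= 0) { print "n/a"; exit }
      printf "%.1f\n", 100 * (si[2] - si[1]) / d
    }'
}

# softirq_pct [SECONDS]: sample /proc/stat twice and print the node-wide %si.
softirq_pct() {
  local a b
  a=$(grep '^cpu ' /proc/stat)
  sleep "${1:-5}"
  b=$(grep '^cpu ' /proc/stat)
  si_delta_pct "$a" "$b"
}
```

Run `softirq_pct 10` once before starting a bpftrace one-liner and once while it runs; a large jump is the signal to add a filter or stop.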

Maps grow for the whole session: @[comm] = count() keeps one entry per unique comm value until bpftrace exits, up to bpftrace's per-map key limit, after which updates for new keys fail. Keep keys low-cardinality (comm rather than filename), and reset long-running maps with clear(@) in an interval block, as in the interval example above.

kprobe instability: functions targeted by kprobes can be inlined by the compiler on some kernel versions. A fully inlined function has no symbol, so the kprobe cannot attach; a partially inlined one attaches but silently misses the inlined calls. If a kprobe isn't attaching or firing, verify the function exists: `bpftrace -l 'kprobe:function_name'`. If it returns nothing, use a tracepoint equivalent instead.

Container PIDs: PIDs inside a container are different from host PIDs, and bpftrace's `pid` built-in always gives you the host-namespace PID. When a container shows PID 1 internally, the host kernel sees it as PID 8432 (or whatever was assigned).

To see which container PID a host PID corresponds to, run `grep NSpid /proc/<host-pid>/status`. The first value is the host PID and the second is the PID inside the container. In a probe, `curtask->real_parent->tgid` gives the parent's host PID, which lets you walk up the process tree to the container's runtime process (e.g. containerd-shim).

This matters when you filter by `pid` in a one-liner and get no output: you may be filtering on the container-namespace PID instead of the host one.
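The NSpid line lists a process's PID in each PID namespace it belongs to, outermost first and innermost (the container's) last. A hypothetical pair of helpers prints that mapping for one host PID, or for every process with a given name. This is handy before writing a `/pid == .../` filter. The optional second argument of `pid_ns_map` exists only so the helper can be tried against a saved status file.

```bash
# pid_ns_map HOST_PID [STATUS_FILE]: print "host_pid -> container_pid" from the
# NSpid line, which lists the PID in each namespace, outermost (host) first and
# innermost (container) last. STATUS_FILE defaults to /proc/<HOST_PID>/status.
pid_ns_map() {
  local status="${2:-/proc/$1/status}"
  awk -v p="$1" '/^NSpid:/ { print p " -> " $NF }' "$status"
}

# pids_for_comm NAME: map every process whose name is exactly NAME (via pgrep -x).
pids_for_comm() {
  local p
  for p in $(pgrep -x "$1"); do
    pid_ns_map "$p"
  done
}
```

For example, `pids_for_comm nginx` on the node prints one `host -> container` line per nginx process. Use the left-hand number in bpftrace filters.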

BTF requirement: bpftrace requires BTF for struct field access ($sk->__sk_common.skc_daddr). If BTF is unavailable, struct access fails. Check /sys/kernel/btf/vmlinux exists before running struct-access one-liners.

Quick Reference

| Probe type | Syntax | Use for |
|---|---|---|
| kernel function entry | `kprobe:function_name` | Function arguments |
| kernel function return | `kretprobe:function_name` | Return value, latency |
| kernel tracepoint | `tracepoint:subsys:name` | Stable hooks |
| userspace function | `uprobe:/path/to/bin:function` | App-level observation |
| CPU sampling | `profile:hz:99` | Flamegraphs, hot code |
| interval | `interval:s:N` | Periodic aggregation |
| process start | `tracepoint:syscalls:sys_enter_execve` | New process detection |

| Built-in variable | Value |
|---|---|
| `pid` | Process ID (host namespace) |
| `tid` | Thread ID |
| `comm` | Process name (up to 15 chars) |
| `nsecs` | Nanoseconds since boot |
| `curtask` | Pointer to the current `task_struct` |
| `retval` | Return value (kretprobe/uretprobe) |
| `args` | Probe arguments struct |

Key Takeaways

- bpftrace is an eBPF compiler, not a monitoring agent — every one-liner compiles, loads, runs, and cleans up a complete kernel program

- kretprobe and tracepoint cover most production debugging needs; use tracepoints for stability across kernel versions

- The security use cases are unique: kernel-level observation that an attacker inside a container cannot suppress, because the probe runs on the host in kernel space

- Every connection, every file open, every process spawn — observable in real time with a single command, no prior instrumentation

- Production caution: high-frequency probes on hot paths add overhead; filter by pid/comm, bound every run with a timeout, and watch %si

What’s Next

bpftrace answers questions you ask in the moment. EP10 covers what happens when you need those answers continuously — not as a one-shot investigation tool, but as persistent telemetry recording every network connection across your entire cluster.

Flow observability from TC hooks is the always-on version: a persistent eBPF program recording every connection attempt, every retransmit, every dropped packet — the ground truth layer that everything above it interprets. When your APM says “timeout” and the kernel says “retransmit storm to one specific endpoint,” the kernel is right.

Next: network flow observability at the kernel level

Get EP10 in your inbox when it publishes → linuxcent.com/subscribe