Introduction

2022 is the year Kubernetes dealt with its legacy. The Docker shim that everyone had been warned about for well over a year was actually removed. PodSecurityPolicy, the broken security primitive that clusters had depended on since 1.3, was deleted. And eBPF started displacing iptables as the networking substrate.

These weren’t additions to Kubernetes. They were the removal of technical debt accumulated over eight years. And the migrations they forced were the most operationally significant events since RBAC went stable.

Kubernetes 1.24 — Dockershim Removed (May 2022)

The dockershim was removed in 1.24. The deprecation had been announced with 1.20 (December 2020), roughly 17 months earlier. It didn’t matter. Operators who hadn’t migrated still scrambled.

The actual migration was straightforward for most environments:

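# On each node, before upgrading to 1.24 (drain the node first):

# 1. Install containerd (containerd.io comes from Docker's apt repository;
#    the distribution package is named containerd)
apt-get install -y containerd.io

# 2. Configure containerd
mkdir -p /etc/containerd
containerd config default | tee /etc/containerd/config.toml
# Edit: set SystemdCgroup = true in the runc options, then restart containerd
systemctl restart containerd

# 3. Update kubelet to use the containerd socket
# /etc/systemd/system/kubelet.service.d/10-kubeadm.conf
# Add: --container-runtime-endpoint=unix:///run/containerd/containerd.sock
# (kubelets older than 1.24 also need --container-runtime=remote)

# 4. Restart
systemctl daemon-reload && systemctl restart kubelet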

What the migration revealed: how many teams were depending on the Docker socket being present on nodes. Tools that mounted /var/run/docker.sock to talk to the Docker daemon — build tools, CI agents, some monitoring agents — broke. The ecosystem had to adapt to nerdctl (containerd’s Docker-compatible CLI), Kaniko, Buildah, or mounting the containerd socket instead.
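As a hedged illustration of building images without a Docker socket, the Pod below runs the Kaniko executor entirely in userspace. The pod name, Git context URL, and Dockerfile path are hypothetical placeholders. `--no-push` keeps the sketch free of registry credentials; a real pipeline would pass `--destination` and mount push credentials instead.

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: kaniko-build              # hypothetical name
spec:
  restartPolicy: Never
  containers:
  - name: kaniko
    image: gcr.io/kaniko-project/executor:latest
    args:
    - --context=git://github.com/example/app.git   # hypothetical repository
    - --dockerfile=Dockerfile
    - --no-push                   # build only; no registry credentials needed
```

Note what is absent: there is no hostPath mount of /var/run/docker.sock, so the build does not care which container runtime the node uses.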

Other 1.24 highlights:

– Beta APIs disabled by default: New beta APIs (as opposed to feature gates) would no longer be enabled automatically; beta APIs that already existed stayed on. This reversed a long-standing policy that had caused too many production clusters to accidentally pick up unstable APIs

– gRPC probes beta: Liveness, readiness, and startup probes could now use gRPC health checks natively in a default configuration, with no more HTTP wrapper endpoints for gRPC services (the feature went stable in 1.27)

– Non-graceful node shutdown alpha: Handles the case where a node disappears before the kubelet can gracefully terminate pods, so that stateful workloads can be detached and recovered on another node after a node failure
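A minimal sketch of the gRPC probe item above, assuming the container implements the standard gRPC health checking protocol (grpc.health.v1.Health). The pod name, image, port, and service name are hypothetical.

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: grpc-probe-demo           # hypothetical name
spec:
  containers:
  - name: server
    image: registry.example.com/orders-grpc:1.0   # hypothetical image
    ports:
    - containerPort: 9090
    livenessProbe:
      grpc:
        port: 9090                # the server must expose the gRPC health service here
      initialDelaySeconds: 10
    readinessProbe:
      grpc:
        port: 9090
        service: readiness        # optional; sent as the service name in the health request
```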

Kubernetes 1.25 — PSP Removed (August 2022)

PodSecurityPolicy was deleted in 1.25. Every cluster that was still using PSP had to migrate to Pod Security Admission (or OPA/Gatekeeper or Kyverno) before upgrading.

Pod Security Admission was GA in 1.25, ready to take over:

# Enforce restricted policy on a namespace
kubectl label namespace production \
  pod-security.kubernetes.io/enforce=restricted \
  pod-security.kubernetes.io/enforce-version=v1.25

# Report violations in a namespace without enforcing
kubectl label namespace staging \
  pod-security.kubernetes.io/warn=restricted \
  pod-security.kubernetes.io/audit=restricted

The dry-run modes (warn, audit) were critical for migration: you could enable them on namespaces and watch what would have been rejected before switching to enforce mode.

The real migration challenge was existing workloads running as root, with privileged security contexts, or with hostPath mounts. The restricted policy rejected all of these. Production applications that had been running for years under permissive PSP policies now failed validation.
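For contrast, here is a sketch of a Pod that should pass the restricted profile, assuming the image can run as a non-root user. The names, image, and UID are hypothetical; the security context fields are the ones the restricted profile checks.

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: restricted-ok             # hypothetical name
  namespace: production
spec:
  securityContext:
    runAsNonRoot: true            # restricted rejects containers that may run as root
    runAsUser: 10001              # hypothetical non-root UID
    seccompProfile:
      type: RuntimeDefault
  containers:
  - name: app
    image: registry.example.com/app:1.0   # hypothetical image
    securityContext:
      allowPrivilegeEscalation: false
      capabilities:
        drop: ["ALL"]
  # No privileged flag, no host namespaces, and no hostPath volumes.
```

Most migration work came down to making long-running workloads match this shape, often by rebuilding images so they no longer assumed UID 0.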

Also in 1.25:

– Ephemeral containers stable: Attach a debug container to a running pod without restarting it

# Debug a running pod with no shell

kubectl debug -it nginx-pod --image=busybox:latest --target=nginx

- CSI ephemeral volumes stable

- cgroups v2 (unified hierarchy) support stable: Enables memory QoS, improved resource accounting

Kubernetes 1.26 — Dynamic Resource Allocation, Storage (December 2022)

1.26 focused on the scheduler and storage:

– Dynamic Resource Allocation alpha: A generalization of the device plugin API — allows requesting complex resources (GPUs, FPGAs, network adapters) with scheduling constraints. The foundation for AI/ML workload scheduling on heterogeneous hardware

– Cross-namespace volume data sources alpha (CrossNamespaceVolumeDataSource feature gate): Populate a PVC from a snapshot or PVC in another namespace, which keeps namespace-based data isolation while sharing data sets

– Pod scheduling readiness alpha: A pod can declare that it’s not ready to be scheduled until external conditions are met (data pre-loading complete, license validated, etc.)

– Removal of in-tree cloud provider code (beta, continued): A long-running effort to move cloud-provider-specific code out of the core Kubernetes binary
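The pod scheduling readiness item in the list above can be sketched as follows. The gate name and image are hypothetical; the scheduler ignores the Pod until a controller (not shown) removes every entry from `schedulingGates`.

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: gated-pod                 # hypothetical name
spec:
  schedulingGates:
  - name: example.com/dataset-preloaded   # hypothetical gate, removed by an external controller
  containers:
  - name: trainer
    image: registry.example.com/trainer:1.0   # hypothetical image
```

While a gate is present, the Pod is held back from scheduling instead of being reported as unschedulable, so it does not trigger unnecessary cluster autoscaling.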

The Dynamic Resource Allocation feature deserves emphasis: it’s the mechanism that makes Kubernetes a serious platform for GPU scheduling in AI/ML workloads. Device plugins (the prior mechanism) had limitations — a pod either got a GPU or it didn’t. DRA allows richer resource semantics: this pod needs two GPUs on the same PCIe bus, or this pod needs a specific GPU model.

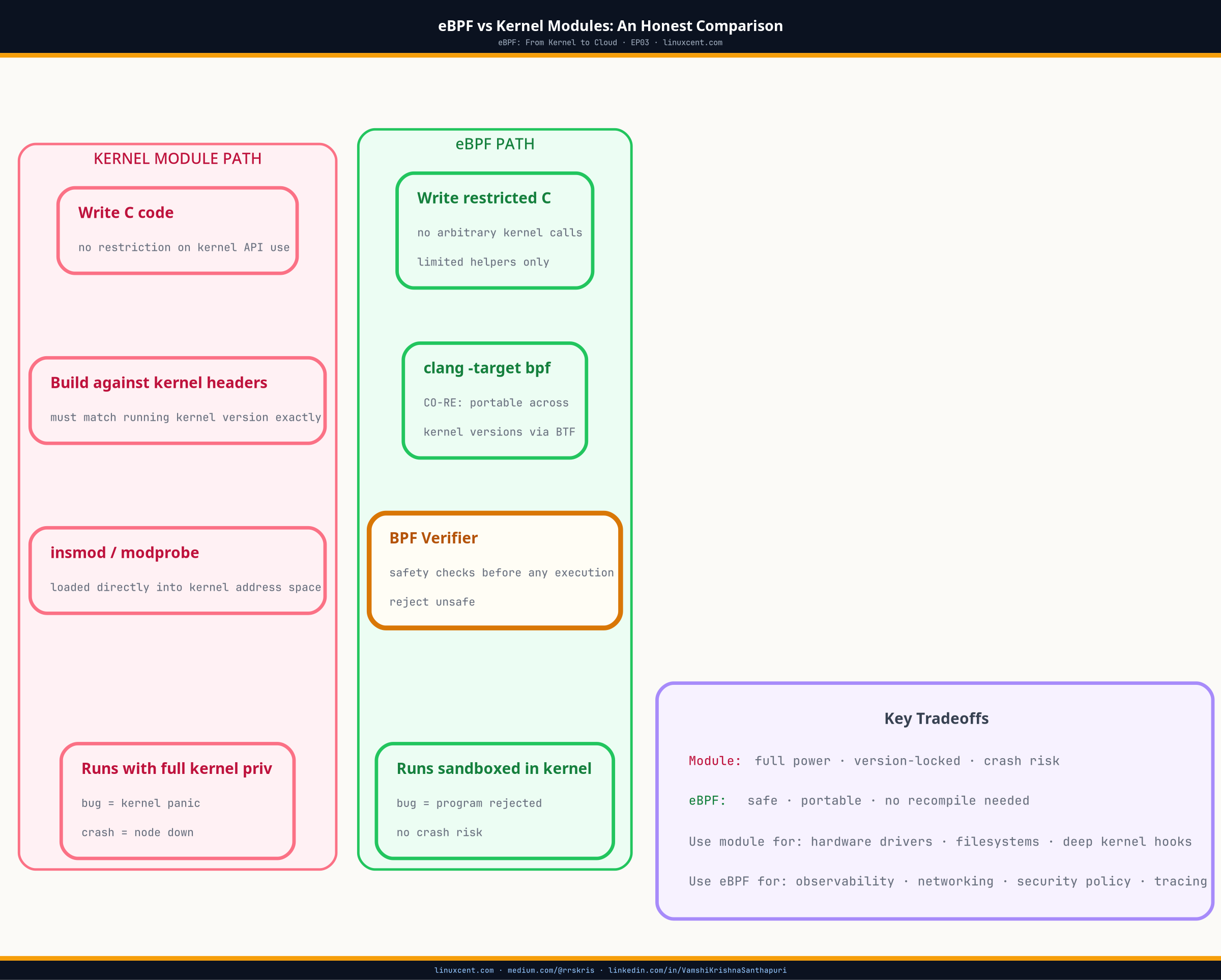

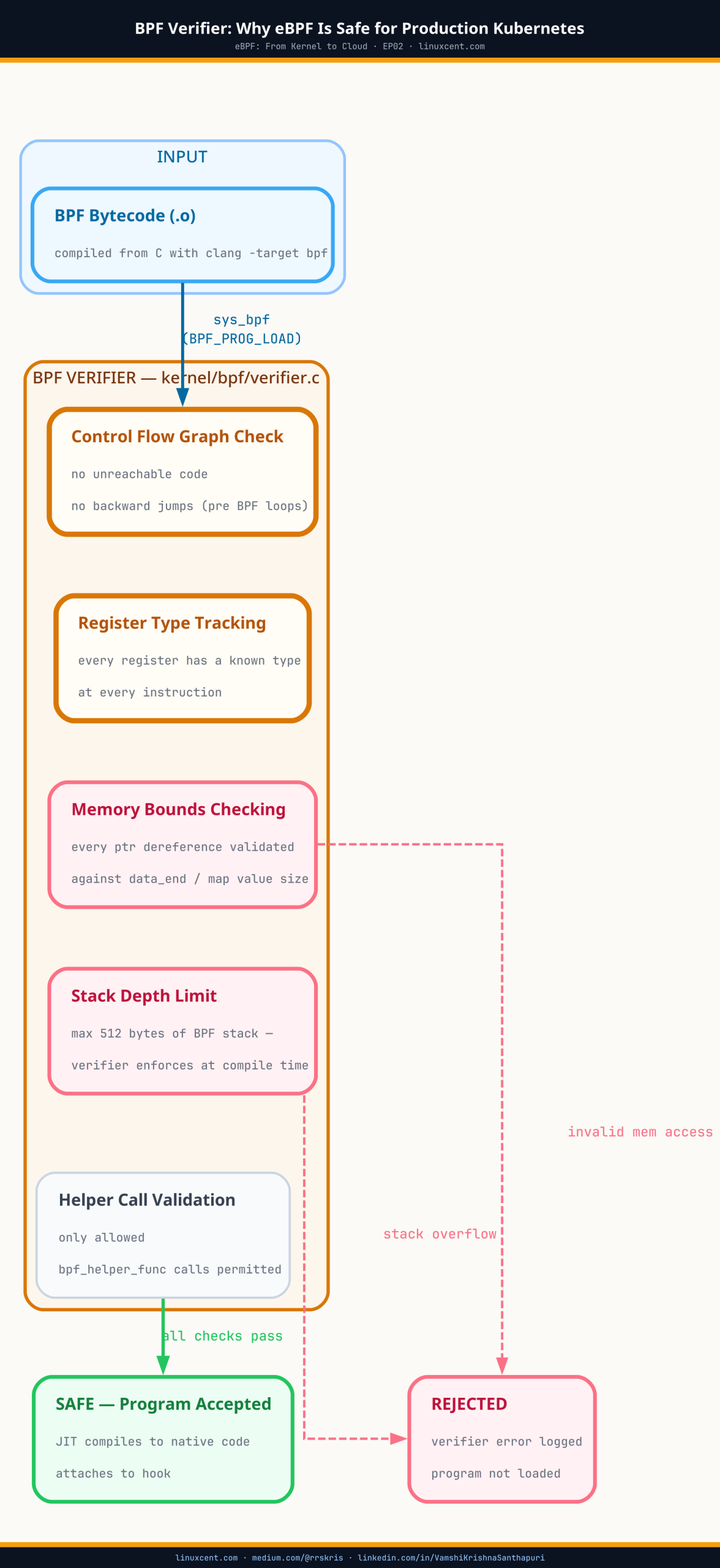

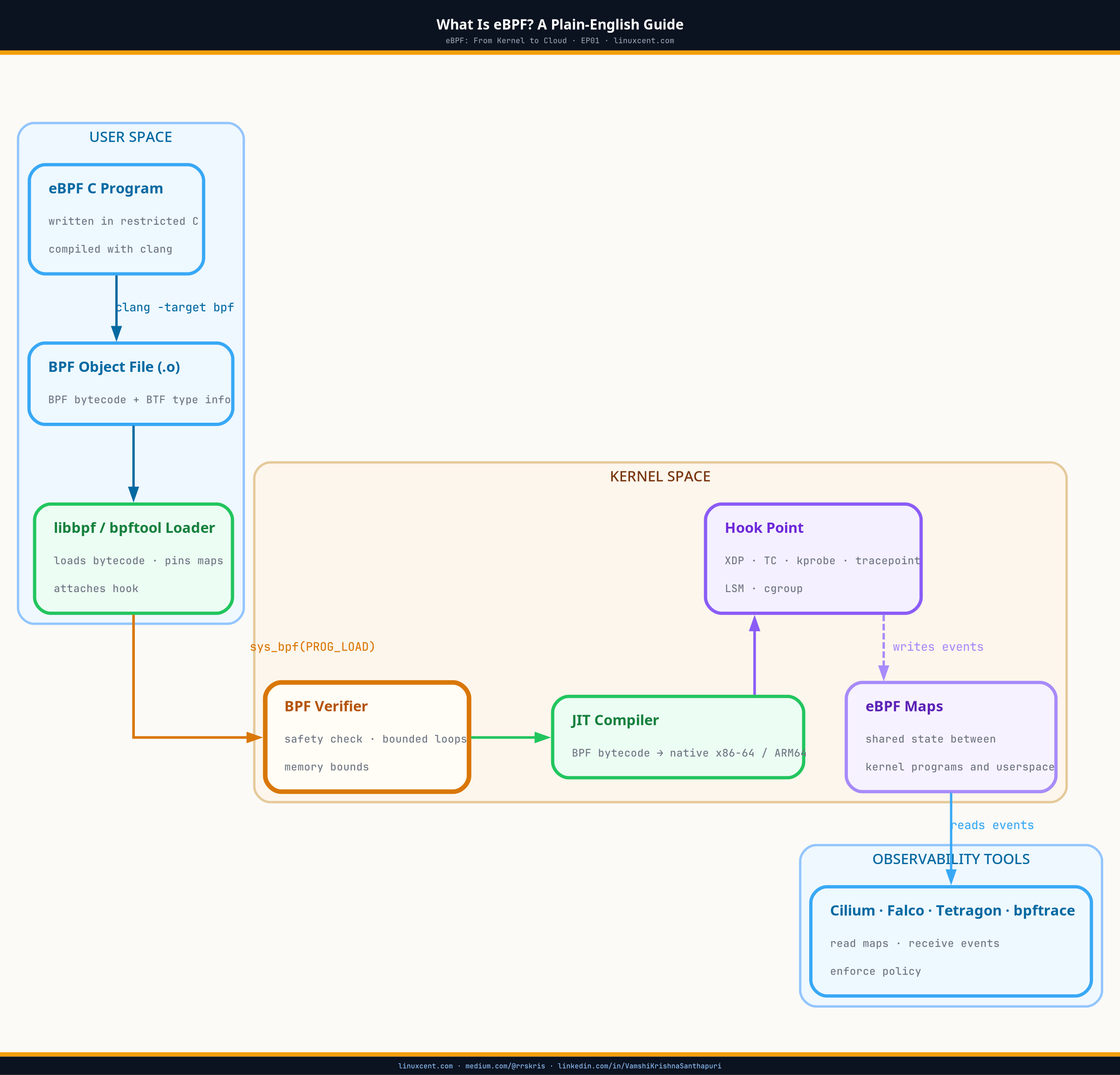

eBPF Reshapes Kubernetes Networking

The most significant architectural shift in Kubernetes networking during 2022–2023 wasn’t a Kubernetes release feature. It was the adoption of eBPF-based CNI solutions — primarily Cilium — as the default networking layer in major managed Kubernetes offerings.

The iptables problem: kube-proxy has implemented Service routing with iptables rules since the earliest Kubernetes releases (iptables mode arrived in 1.1 and became the default in 1.2). Every Service adds iptables rules to every node. At 10,000 services, the iptables rule table on each node has hundreds of thousands of rules. Matching a new connection against these rules is a sequential walk, O(n) in the number of rules. Updating them means taking a lock and rewriting large parts of the rule set. At scale, iptables becomes a bottleneck.

The eBPF solution: Cilium can replace kube-proxy entirely, implementing Service routing using eBPF maps, which are hash tables in kernel memory. Service lookup is O(1). Updates change individual map entries instead of rewriting a rule set. Network policy enforcement happens in the kernel, before packets even reach the application.

# Check if Cilium is running in kube-proxy replacement mode
# (run inside a Cilium agent pod; newer releases name the binary cilium-dbg)
cilium status | grep KubeProxyReplacement
# KubeProxyReplacement:   True

# eBPF-based service map: inspect directly
cilium service list
# ID Frontend Service Type Backend
# 1 10.96.0.1:443 ClusterIP 10.0.0.5:6443
# 2 10.96.0.10:53 ClusterIP 10.0.1.2:53, 10.0.1.3:53

Network policy enforcement: Cilium’s NetworkPolicy implementation enforces rules at the eBPF layer — packets that would be dropped by policy are dropped before they ever leave the kernel, before they touch the pod’s network stack. This is both faster and more secure than userspace enforcement.
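To make the policy discussion concrete, here is a standard NetworkPolicy of the kind Cilium compiles into its eBPF datapath. The namespace, labels, and port are hypothetical. The manifest uses only the upstream networking.k8s.io/v1 API, so it is not Cilium-specific.

```yaml
apiVersion: networking.k8s.io/v1
kind: NetworkPolicy
metadata:
  name: api-allow-frontend        # hypothetical name
  namespace: production
spec:
  podSelector:
    matchLabels:
      app: api                    # pods this policy protects
  policyTypes:
  - Ingress
  ingress:
  - from:
    - podSelector:
        matchLabels:
          app: frontend           # only frontend pods may connect
    ports:
    - protocol: TCP
      port: 8080
```

Once a policy selects the api pods for ingress, any traffic not explicitly allowed is dropped.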

Hubble: Cilium’s observability layer — built on the same eBPF probes — provides real-time network flow visibility, HTTP layer observability (which service called which endpoint, response codes), and DNS query logging without any application changes.

Major adoption milestones:

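– GKE Dataplane V2, which is built on Cilium, became generally available in 2021

– Amazon EKS added Cilium support, and EKS Anywhere ships Cilium as its default CNI

– Azure AKS enabled Cilium-based networking (Azure CNI Powered by Cilium)

– GKE Autopilot clusters use Dataplane V2 exclusively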

Kubernetes 1.27 — Graceful Failure, In-Place Resize Alpha (April 2023)

- In-Place Pod Vertical Scaling alpha: Change the CPU and memory resources of a running container without restarting the pod. For databases, JVM-based applications, and anything with warm caches, live resizing is a significant operational improvement

# Resize a container's CPU request without restart
# (alpha in 1.27: requires the InPlacePodVerticalScaling feature gate)
kubectl patch pod database-pod --type='json' \
  -p='[{"op": "replace", "path": "/spec/containers/0/resources/requests/cpu", "value": "2"}]'

- SeccompDefault stable: Kubelets started with --seccomp-default apply the RuntimeDefault seccomp profile to every pod that does not set one, a meaningful reduction in the default syscall attack surface

- Mutable scheduling directives for Jobs stable: Change the node affinity, node selector, and tolerations in the pod template of a suspended Job that has not yet started

- ReadWriteOncePod PersistentVolume access mode beta: A volume can be mounted by only a single pod at a time, the correct semantic for databases with file-level locking requirements (stable in 1.29)
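Two of the items above can be sketched in one pair of manifests. The image, sizes, and names are hypothetical apart from `database-pod`, which matches the patch command earlier. `resizePolicy` states whether a resource change needs a container restart, and the claim uses the ReadWriteOncePod access mode, which assumes a CSI driver that supports it.

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: database-pod
spec:
  containers:
  - name: db
    image: registry.example.com/db:1.0   # hypothetical image
    resizePolicy:
    - resourceName: cpu
      restartPolicy: NotRequired         # CPU can change while the container keeps running
    - resourceName: memory
      restartPolicy: RestartContainer    # memory changes restart this container
    resources:
      requests: {cpu: "1", memory: 2Gi}
      limits: {cpu: "1", memory: 2Gi}
    volumeMounts:
    - name: data
      mountPath: /var/lib/db
  volumes:
  - name: data
    persistentVolumeClaim:
      claimName: db-data
---
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: db-data                          # hypothetical name
spec:
  accessModes:
  - ReadWriteOncePod                     # at most one pod cluster-wide may mount the volume
  resources:
    requests:
      storage: 20Gi
```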

The 1.5 Million Lines Removed: Cloud Provider Code Migration

One of the largest ongoing engineering efforts in Kubernetes 1.26–1.31 was the removal of in-tree cloud provider code. Every major cloud provider (AWS, Azure, GCP, OpenStack, vSphere) had code compiled directly into the Kubernetes control plane binaries.

The result: the Kubernetes API server and controller manager binaries contained code for AWS EBS volumes, GCE persistent disks, Azure managed disks, OpenStack Cinder — regardless of which cloud you were running on.

The migration moved this code to external Cloud Controller Managers (CCM) — separate processes that communicate with the API server like any other controller:

Before: kube-controller-manager (monolithic, includes all cloud providers)

After: kube-controller-manager (generic) + cloud-controller-manager (cloud-specific, external)

By 1.31, approximately 1.5 million lines of code had been removed from the core binaries, reducing binary sizes by approximately 40%. This is the largest refactor in Kubernetes history.
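On the node side, the switch to an external cloud controller manager mostly comes down to one kubelet setting. This fragment assumes a kubeadm-managed cluster and the kubeadm v1beta3 configuration API. The cloud-controller-manager itself is deployed from the cloud provider's own manifests, which are not shown here.

```yaml
apiVersion: kubeadm.k8s.io/v1beta3
kind: InitConfiguration
nodeRegistration:
  kubeletExtraArgs:
    cloud-provider: external   # kubelet waits for an external CCM to initialize the node
```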

Gateway API: Replacing Ingress (2022–2023)

The Ingress API, which graduated to stable in 1.19, has fundamental limitations:

– No support for TCP/UDP routing (HTTP only)

– No traffic splitting between multiple backends

– No header-based routing

– Vendor-specific features implemented via annotations (not portable)

– No RBAC granularity within a single Ingress resource

Gateway API (kubernetes-sigs/gateway-api) was designed as the successor, with a role-based model:

GatewayClass → Managed by infrastructure provider (cluster admin)

Gateway → Managed by cluster operators

HTTPRoute → Managed by application developers
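The first tier of that model is the smallest resource. In this sketch the class name `nginx` matches the `gatewayClassName` used in the Gateway example, while the `controllerName` value is a hypothetical placeholder for whatever string the installed controller documents.

```yaml
apiVersion: gateway.networking.k8s.io/v1
kind: GatewayClass
metadata:
  name: nginx                    # cluster-scoped; referenced by Gateways via gatewayClassName
spec:
  controllerName: example.com/gateway-controller   # hypothetical controller identifier
```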

# Gateway: cluster operator configures the load balancer
apiVersion: gateway.networking.k8s.io/v1
kind: Gateway
metadata:
  name: production-gateway
spec:
  gatewayClassName: nginx
  listeners:
  - name: https
    port: 443
    protocol: HTTPS
    tls:
      mode: Terminate
      certificateRefs:
      - name: tls-cert
---
# HTTPRoute: application team configures routing
apiVersion: gateway.networking.k8s.io/v1
kind: HTTPRoute
metadata:
  name: api-route
spec:
  parentRefs:
  - name: production-gateway
  rules:
  - matches:
    - path:
        type: PathPrefix
        value: /api/v2
    backendRefs:
    - name: api-v2-service
      port: 8080
      weight: 90
    - name: api-v3-canary
      port: 8080
      weight: 10

Gateway API reached GA (v1.0) in October 2023, with the core HTTPRoute, Gateway, and GatewayClass resources graduating to stable.
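Header-based routing, one of the Ingress gaps listed earlier, is a first-class match type in HTTPRoute. The sketch below reuses the gateway and service names from the example above. The route name, header name, and header value are hypothetical.

```yaml
apiVersion: gateway.networking.k8s.io/v1
kind: HTTPRoute
metadata:
  name: api-beta-header-route    # hypothetical name
spec:
  parentRefs:
  - name: production-gateway
  rules:
  - matches:
    - path:
        type: PathPrefix
        value: /api
      headers:
      - type: Exact
        name: x-api-channel      # hypothetical header
        value: beta
    backendRefs:
    - name: api-v3-canary
      port: 8080
  - backendRefs:                 # no matches: catches all remaining requests
    - name: api-v2-service
      port: 8080
```

Requests under /api that carry the header go to the canary service and everything else goes to the stable backend, with no controller-specific annotations involved.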

Key Takeaways

- Dockershim removal in 1.24 completed the CRI migration that started in 1.5 — the Kubernetes runtime interface is now clean, with containerd and CRI-O as the standard runtimes

- PSP removal in 1.25 forced a migration that should have happened years earlier; Pod Security Admission’s simplicity is a feature, not a limitation

- eBPF-based networking (Cilium, Dataplane V2) is now available on GKE, EKS, and AKS: O(1) service routing and kernel-level policy enforcement replace the iptables rule chains that kube-proxy has relied on since its earliest releases

- Dynamic Resource Allocation (1.26 alpha) is the foundation for AI/ML GPU scheduling — more capable than device plugins and designed for heterogeneous hardware requests

- Gateway API reaching GA gave the annotation-driven, non-portable Ingress API a role-oriented, extensible successor

- The cloud provider code removal (1.5M lines) is the largest refactor in Kubernetes history, a prerequisite for a maintainable, leaner core

Series: Kubernetes: From Borg to Platform Engineering | linuxcent.com